-

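With the development of stereo matching algorithms [1] and 3D sensors [2], point cloud data have become increasingly important [3]. High-quality point cloud data form a bridge between the virtual world and the real world. Processing point clouds enables better perception of the environment, and semantic labels further enrich the information that a point cloud conveys. This is of great significance to research in computer vision, intelligent driving, remote sensing and mapping, and smart cities.

Current point cloud semantic segmentation methods fall into three main categories: unsupervised methods based on block features, methods based on traditional machine learning, and methods based on deep learning.

Unsupervised methods based on block features first cluster the point cloud into segmented blocks [4-5], then extract features from each block and establish classification rules for the different ground objects, so that the category of every block can be determined. These methods place high demands on the quality of the segmented blocks. Because point density is uneven, the segmentation stage is prone to over-segmentation and under-segmentation. The problem is particularly evident for street trees in urban environments: owing to occlusion by the crown and other factors, the point density at the junction between trunk and crown is low, which easily causes over-segmentation and degrades the final semantic segmentation accuracy.

Methods based on traditional machine learning include point-feature-based methods and segmented-block-based methods [6]. Point-feature-based methods [7-9] extract high-dimensional features for each point within its neighborhood and use the resulting feature vectors to train a machine learning model that classifies every point. Because computing high-dimensional features per point is costly in both computing resources and time, and discards the geometric relationships between points, block-based semantic segmentation methods were proposed. Block-based methods [10-13] cluster the object points into blocks, extract block features, build high-dimensional feature vectors, train a machine learning model, and finally use the model to classify the objects.

Deep learning has achieved great success in the image domain since it was proposed [14-16]. In recent years, deep learning methods for point clouds have developed rapidly, mainly including projection-based networks [17], voxel-based networks [18-19], point-based networks [20-24] and superpoint-graph-based networks [25]. These methods train the parameters of a designed deep neural network with large amounts of training data, extract high-level features from the point cloud and classify them, and ultimately achieve point-wise semantic segmentation.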

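At present, both traditional machine learning methods and deep learning methods need large amounts of data to train the model as well as substantial computing resources. It is difficult to extract a large sample set from a small amount of point cloud data, and street trees and pole-like objects in urban road environments are imbalanced in sample number, which makes deep learning methods harder to apply. Unsupervised methods based on block features occupy few computing resources and are highly interpretable, but they require many features and cannot handle under-segmentation and over-segmentation. In urban road environments, pole-like objects and street trees stand close to each other, which easily leads to under-segmentation; owing to occlusion and other factors, the point density where the trunk meets the crown is low, which easily leads to over-segmentation. Solving under-segmentation and over-segmentation is therefore the key to improving semantic segmentation.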

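To address these problems, this paper proposes a point cloud semantic segmentation method based on a segmented-block merging strategy, suited to the semantic segmentation of street trees and pole-like objects in urban road environments. Building on point cloud clustering segmentation, the method designs a merging strategy according to the distribution of block centroids, which resolves the semantic segmentation errors caused by over-segmentation and under-segmentation. Experiments show that, for street trees and pole-like objects, the proposed merging strategy achieves better semantic segmentation than the original block-based method.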

-

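Compared with 2D image information, 3D point clouds provide rich geometric, shape and scale information and are less affected by changes in illumination intensity. Attaching semantic information to point clouds enables better environment perception and benefits subsequent object detection, classification and recognition based on 3D point cloud data.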

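Because the point density at the junction between the trunk and the crown of a street tree is low in urban road environments, the trunk and crown tend to be over-segmented, that is, a single street tree is split into several segmented blocks. To resolve the semantic segmentation errors caused by classifying blocks directly, this paper builds on DBSCAN [26] clustering segmentation and proposes a semantic segmentation method based on a segmented-block merging strategy, which improves the precision and recall of block-based point cloud semantic segmentation. The method consists of three steps: first, the segmented blocks are preliminarily classified according to geometric features; second, pole-like, trunk and car blocks are extracted by principal component analysis; finally, semantic segmentation is achieved with the block merging strategy.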
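The clustering step that the method builds on can be illustrated with a minimal, brute-force DBSCAN sketch. This is not the implementation used in the paper: the function names, the `eps` and `min_pts` parameters and their values are assumptions chosen for illustration, and a real pipeline would use a spatial index instead of the quadratic neighbor search shown here.

```python
import math

def region_query(points, idx, eps):
    """Indices of all points within eps of points[idx] (the point itself included)."""
    return [j for j, q in enumerate(points) if math.dist(points[idx], q) <= eps]

def dbscan(points, eps, min_pts):
    """Label every point with a cluster id (0, 1, ...) or -1 for noise."""
    UNVISITED, NOISE = None, -1
    labels = [UNVISITED] * len(points)
    cluster_id = 0
    for i in range(len(points)):
        if labels[i] is not UNVISITED:
            continue
        neighbors = region_query(points, i, eps)
        if len(neighbors) < min_pts:
            labels[i] = NOISE          # may later be claimed as a border point
            continue
        labels[i] = cluster_id         # i is a core point: start a new cluster
        queue = list(neighbors)
        while queue:
            j = queue.pop()
            if labels[j] == NOISE:
                labels[j] = cluster_id  # border point reached from a core point
            if labels[j] is not UNVISITED:
                continue
            labels[j] = cluster_id
            j_neighbors = region_query(points, j, eps)
            if len(j_neighbors) >= min_pts:
                queue.extend(j_neighbors)  # j is also a core point: keep growing
        cluster_id += 1
    return labels

# Two small groups of points and one isolated point (hypothetical data).
pts = [(0, 0, 0), (0.1, 0, 0), (0, 0.1, 0),
       (5, 5, 0), (5.1, 5, 0), (5, 5.1, 0),
       (20, 20, 20)]
labels = dbscan(pts, eps=0.5, min_pts=3)
```

Each resulting label identifies one segmented block; points labeled -1 belong to no block.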

-

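For the blocks obtained by clustering, the number of points in each block is counted first. Building point clouds are dense, so DBSCAN clusters them into one or more blocks that contain a large number of points. By setting thresholds on the point count, the objects of interest are extracted, excluding buildings and blocks whose point count falls below the lower threshold:

$$cluster^i = \begin{cases} Buildings, & cluster^i.Num \geqslant Th_{Num1} \\ Noise, & cluster^i.Num \leqslant Th_{Num2} \\ Interest, & \text{otherwise} \end{cases}$$ (1)

where $cluster^i.Num$ is the number of points in any segmented block, and $Th_{Num1}$ and $Th_{Num2}$ are the upper and lower point-count thresholds.

Next, the centroid of each cluster is computed, and street trees and crowns are extracted from the clusters of interest. The $Interest$ blocks selected by formula (1) contain the ground objects other than buildings, such as street trees, crowns, pole-like objects, trunks and cars. The centroids of street trees and crowns differ markedly in spatial distribution from those of pole-like objects, trunks and other objects, so street trees and crowns can be extracted with formula (2):

$$Interest = \begin{cases} trees, crowns, & P_{gravity}.z \geqslant Th_1 \\ poles, cars, trunks, others, & \text{otherwise} \end{cases}$$ (2)

where $P_{gravity}.z$ is the $z$ coordinate of the block centroid and $Th_1$ is the centroid height threshold.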
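As a minimal sketch of formulas (1) and (2), the two threshold tests can be written as below. The function names and the threshold values in the usage lines are hypothetical; the paper does not report values for the point-count thresholds or the centroid height threshold.

```python
def centroid(points):
    """Centroid of a block given as a list of (x, y, z) tuples."""
    n = len(points)
    return tuple(sum(p[k] for p in points) / n for k in range(3))

def classify_by_size(block, th_num1, th_num2):
    """Formula (1): split blocks into buildings, noise and blocks of interest."""
    n = len(block)
    if n >= th_num1:
        return "buildings"
    if n <= th_num2:
        return "noise"
    return "interest"

def split_by_centroid_height(block, th_1):
    """Formula (2): high centroids are trees or crowns, the rest is handled later."""
    if centroid(block)[2] >= th_1:
        return "trees_crowns"
    return "poles_cars_trunks_others"

# Hypothetical thresholds and toy blocks, for illustration only.
big_block = [(0.0, 0.0, 0.0)] * 1000
tiny_block = [(0.0, 0.0, 0.0)] * 5
crown_like = [(0.0, 0.0, 6.0), (0.0, 0.0, 8.0)]
car_like = [(0.0, 0.0, 0.5), (1.0, 0.0, 1.5)]

size_labels = [classify_by_size(b, th_num1=500, th_num2=10)
               for b in (big_block, tiny_block, crown_like * 50)]
height_labels = [split_by_centroid_height(b, th_1=3.0)
                 for b in (crown_like, car_like)]
```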

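For clusters such as pole-like objects, cars and street-tree trunks, principal component analysis (PCA) is applied to obtain the geometric feature distribution of each cluster, which allows poles, trunks and cars to be classified.

PCA is a mathematical method that finds a set of mutually orthogonal coordinate axes from the data in order to reduce its dimensionality. First, the centroid of the cluster is computed as in formula (3):

$$\overline X = \frac{1}{n}\sum\limits_{i = 1}^n X_i$$ (3)

where $n$ is the number of points in the current cluster, $X_i$ is each point of the cluster, and $\overline X$ is the centroid of the cluster.

The covariance matrix of the cluster is then computed as in formula (4):

$$S = \frac{1}{n}\sum\limits_{i = 1}^n \left( X_i - \overline X \right)^{\rm T}\left( X_i - \overline X \right)$$ (4)

where $X_i$ is each point of the cluster, written as a $1 \times 3$ row vector so that $S$ is a $3 \times 3$ matrix; $\overline X$ is the centroid of the cluster; and $n$ is the number of points in the cluster.

Singular value decomposition (SVD) is applied to the covariance matrix $S$ to compute its eigenvalues and eigenvectors:

$$S = V\begin{pmatrix} \lambda_1 & & \\ & \lambda_2 & \\ & & \lambda_3 \end{pmatrix}V^{\rm T}$$ (5)

where $S$ is the covariance matrix; $\lambda_1 \geqslant \lambda_2 \geqslant \lambda_3$ are the eigenvalues; and $V$ is the matrix of eigenvectors, ordered consistently with the eigenvalues. The eigenvector corresponding to the largest eigenvalue $\lambda_1$ is the principal direction of the cluster.

From the eigenvalues, the linearity and planarity of the cluster [7] and the angle between its principal direction and the z axis are computed:

$$L_\lambda = \frac{\lambda_1 - \lambda_2}{\lambda_1}$$ (6)

$$P_\lambda = \frac{\lambda_2 - \lambda_3}{\lambda_1}$$ (7)

$$\alpha = \arccos \frac{\vec{n}_1 \cdot \vec{n}_z}{\left| \vec{n}_1 \right|\left| \vec{n}_z \right|}$$ (8)

where $L_\lambda$ is the linearity of the cluster; $P_\lambda$ is the planarity of the cluster; $\alpha$ is the angle between the two vectors; $\vec{n}_1$ is the principal direction of the cluster; and $\vec{n}_z$ is the direction vector of the z axis.

Pole-like objects and trunks have a strongly linear distribution, which appears in the eigenvalues as $\lambda_1 \gg \lambda_2$, and their principal direction is roughly parallel to the z axis. Vehicles have a strongly planar distribution, which appears as $\lambda_2 \gg \lambda_3$. Thresholds can therefore be set to classify pole-like objects, trunks and vehicles:

$$poles, cars, trunks, others = \begin{cases} poles, trunks, & L_\lambda \geqslant Th_L \ \&\ \alpha \leqslant Th_\alpha \\ cars, & P_\lambda \geqslant Th_P \\ others, & \text{otherwise} \end{cases}$$ (9)

where $Th_L$, $Th_P$ and $Th_\alpha$ are the linearity threshold, the planarity threshold and the threshold on the angle between the principal direction of the cluster and the z axis, respectively.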
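Formulas (3) to (9) can be sketched with NumPy as follows. This is an illustration under stated assumptions rather than the authors' code: it uses `numpy.linalg.eigh` in place of SVD (for a symmetric covariance matrix both give the same eigenvalues), it takes the absolute value of the dot product in formula (8) because the sign of an eigenvector is arbitrary, and it assumes the cluster contains at least two distinct points so that the largest eigenvalue is nonzero. The default thresholds are the values reported in the experiment section of the paper.

```python
import numpy as np

def pca_features(points):
    """Return (linearity, planarity, angle to the z axis in degrees) of one cluster."""
    X = np.asarray(points, dtype=float)      # shape (n, 3), one point per row
    centered = X - X.mean(axis=0)            # subtract the centroid, formula (3)
    S = centered.T @ centered / len(X)       # 3x3 covariance matrix, formula (4)
    eigvals, eigvecs = np.linalg.eigh(S)     # eigh returns eigenvalues in ascending order
    l3, l2, l1 = eigvals                     # relabel so that l1 >= l2 >= l3
    n1 = eigvecs[:, 2]                       # eigenvector of the largest eigenvalue
    linearity = (l1 - l2) / l1               # formula (6)
    planarity = (l2 - l3) / l1               # formula (7)
    # Formula (8) with n_z = (0, 0, 1); abs() is an added assumption (sign ambiguity).
    cos_alpha = abs(n1[2]) / np.linalg.norm(n1)
    alpha = float(np.degrees(np.arccos(np.clip(cos_alpha, 0.0, 1.0))))
    return float(linearity), float(planarity), alpha

def classify_cluster(points, th_l=0.9, th_p=0.1, th_alpha=20.0):
    """Formula (9): threshold test on linearity, planarity and tilt angle."""
    linearity, planarity, alpha = pca_features(points)
    if linearity >= th_l and alpha <= th_alpha:
        return "poles_trunks"
    if planarity >= th_p:
        return "cars"
    return "others"

# Toy clusters: a thin vertical column and a flat horizontal patch (hypothetical data).
pole_like = [(0.01 * (i % 2), 0.01 * ((i // 2) % 2), 0.5 * i) for i in range(20)]
flat_patch = [(float(x), float(y), 0.0) for x in range(10) for y in range(5)]
pole_label = classify_cluster(pole_like)
patch_label = classify_cluster(flat_patch)
```

The flat patch is labeled as a car only because it satisfies the planarity test; the sketch makes no attempt to distinguish cars from other planar objects.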

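The centroids of all clusters are projected onto the horizontal plane. Specifically, centroids are computed for the pole-like objects, trunks, street trees and crowns and projected onto the horizontal plane, and a kd-tree (k-dimensional tree) is built on the projected centroids. For each projected centroid of a pole-like object or trunk, a k-nearest-neighbor search is performed; if the query point and a neighbor satisfy formula (10), the cluster corresponding to the query point and the cluster corresponding to the neighbor are merged.

$$\left\| P_{query} - P_{nearest} \right\|_2 < Th_{nearest} \ \&\ \left| P_{query}.x - P_{nearest}.x \right| < Th_{nearest\_x}$$ (10)

where $P_{query}$ is the query point after projection onto the horizontal plane; $P_{nearest}$ is a neighbor of the query point; and $Th_{nearest}$ and $Th_{nearest\_x}$ are the Euclidean distance threshold and the distance threshold along the x axis, respectively. $\left\| P_{query} - P_{nearest} \right\|_2$ is the Euclidean distance between the two points, as in formula (11):

$$\left\| P_{query} - P_{nearest} \right\|_2 = \sqrt{\left( x_{query} - x_{nearest} \right)^2 + \left( y_{query} - y_{nearest} \right)^2}$$ (11)

After merging, the blocks are mainly pole-like objects and street trees. The two classes are clearly distinguishable, for example in their projected area on the horizontal plane, the volume of their minimum bounding box and their point density distribution. For the merged blocks, correspondences are established between the natural and spatial features of pole-like objects and street trees and the classification knowledge; the features of each cluster are then matched against the classification rules, which yields a coarse semantic segmentation of the point cloud. In this paper, the difference between the projected areas of the points in two height ranges, chosen according to the height of the object, is used to classify street trees and pole-like objects:

$$poles, trees = \begin{cases} poles, & Area_{above} - Area_{below} \leqslant Th_{AreaDiff} \\ trees, & Area_{above} - Area_{below} > Th_{AreaDiff} \end{cases}$$ (12)

where $Area_{above}$ and $Area_{below}$ are the projected areas on the horizontal plane of all points of the current block and of the points within a certain range at the bottom of the current block, respectively, and $Th_{AreaDiff}$ is the area difference threshold.

After the coarse semantic segmentation, the blocks whose point count is below the threshold in formula (1) have been treated as noise. To classify these points correctly, a kd-tree is built on the points that have already been labeled; for each noise point from formula (1), its neighborhood is searched and the point is assigned the class that is most frequent among its neighbors, which yields the refined semantic segmentation.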
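The merging test of formulas (10) and (11) and the rule of formula (12) can be sketched as below. Several simplifications are assumptions of this sketch, not statements about the paper's implementation: a brute-force search over centroids stands in for the kd-tree, only the single nearest tree or crown block is considered for each pole or trunk block, centroids are not recomputed after a merge, and the projected areas are passed in as numbers because the paper does not specify how they are measured. All names and threshold values are hypothetical.

```python
import math

def centroid_xy(points):
    """Centroid of a block projected onto the horizontal (x, y) plane."""
    n = len(points)
    return (sum(p[0] for p in points) / n, sum(p[1] for p in points) / n)

def should_merge(p_query, p_nearest, th_nearest, th_nearest_x):
    """Formula (10): close in the horizontal plane and close along the x axis."""
    return (math.dist(p_query, p_nearest) < th_nearest
            and abs(p_query[0] - p_nearest[0]) < th_nearest_x)

def merge_blocks(slender_blocks, canopy_blocks, th_nearest, th_nearest_x):
    """Attach each pole/trunk block to its nearest tree/crown block if formula (10) holds."""
    merged = [list(b) for b in canopy_blocks]
    centers = [centroid_xy(b) for b in canopy_blocks]   # fixed during merging
    for block in slender_blocks:
        q = centroid_xy(block)
        if centers:
            k = min(range(len(centers)), key=lambda i: math.dist(q, centers[i]))
            if should_merge(q, centers[k], th_nearest, th_nearest_x):
                merged[k].extend(block)
                continue
        merged.append(list(block))                      # no partner: keep as its own block
    return merged

def classify_merged(area_above, area_below, th_area_diff):
    """Formula (12): a large area difference indicates a tree, otherwise a pole."""
    return "trees" if area_above - area_below > th_area_diff else "poles"

# Toy scene: a trunk under a crown, and a separate pole 10 m away (hypothetical data).
trunk = [(0.0, 0.0, 0.5 * z) for z in range(5)]
crown = [(0.2, 0.1, 3.0 + 0.5 * z) for z in range(5)]
pole = [(10.0, 0.0, 0.5 * z) for z in range(5)]
merged = merge_blocks([trunk, pole], [crown], th_nearest=1.0, th_nearest_x=0.5)
```

In this toy scene the trunk is merged into the crown block, while the distant pole remains a separate block.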

-

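Six groups of data are selected for the experiments. A comparative experiment contrasts the semantic segmentation results of direct block classification with those of the proposed method, which provides a qualitative analysis of the results. Precision and recall are then computed for the pole-like objects and street trees in the six groups of data, which provides a quantitative analysis and verifies the effectiveness of the proposed method.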

-

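This paper uses part of the dataset provided by Xiang et al. [11], divided into six groups. The dataset was collected with a SICK LMS511 vehicle-mounted LiDAR. The sizes of the six groups are listed in Table 1, where X, Y and Z denote the extent of the data along the three coordinate axes. The seven categories of the original dataset are merged into five: buildings (marked in yellow), street trees (red), cars (brown), pole-like objects (pink) and ground (blue).

Table 1. Sizes of the six groups of data

| Item | Region1/m | Region2/m | Region3/m | Region4/m | Region5/m | Region6/m |
| --- | --- | --- | --- | --- | --- | --- |
| X | 102.144 | 49.873 | 63.899 | 124.013 | 42.347 | 91.563 |
| Y | 25.477 | 33.699 | 40.929 | 25.068 | 22.042 | 32.315 |
| Z | 29.528 | 18.742 | 34.141 | 17.065 | 14.118 | 33.042 |

The confusion matrix is a common way of expressing classification accuracy. As shown in Table 2, each row gives the number of points predicted as the corresponding class, and each column gives the number of points that truly belong to the corresponding class. To evaluate the semantic segmentation results more objectively, precision (denoted Acc) and recall are computed with formulas (13) and (14) for quantitative analysis; higher precision and recall indicate better segmentation.

$$Acc = \frac{TP}{TP + FP}$$ (13)

$$Recall = \frac{TP}{TP + FN}$$ (14)

Table 2. Confusion matrix

| | Ground truth: Positive | Ground truth: Negative |
| --- | --- | --- |
| Prediction: Positive | TP | FP |
| Prediction: Negative | FN | TN |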
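Formulas (13) and (14) can be computed per class from point-wise labels as in the following minimal sketch. The function name and the toy labels are hypothetical, and returning NaN when a denominator is zero is a choice made here for illustration.

```python
def precision_recall(predicted, truth, positive):
    """Precision (Acc in formula (13)) and recall (formula (14)) for one class."""
    pairs = list(zip(predicted, truth))
    tp = sum(p == positive and t == positive for p, t in pairs)
    fp = sum(p == positive and t != positive for p, t in pairs)
    fn = sum(p != positive and t == positive for p, t in pairs)
    acc = tp / (tp + fp) if tp + fp else float("nan")
    recall = tp / (tp + fn) if tp + fn else float("nan")
    return acc, recall

# Toy point-wise labels (hypothetical): 2 true positives, 1 false positive, 1 false negative.
predicted = ["tree", "tree", "pole", "pole", "tree"]
truth = ["tree", "pole", "pole", "tree", "tree"]
tree_acc, tree_recall = precision_recall(predicted, truth, positive="tree")
```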

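Six groups of data are selected and comparative experiments are set up to verify the effectiveness of the proposed method. The key parameters in the experiments are $Th_L = 0.9$, $Th_P = 0.1$, $Th_\alpha = 20°$ and $Th_{AreaDiff} = 1$.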

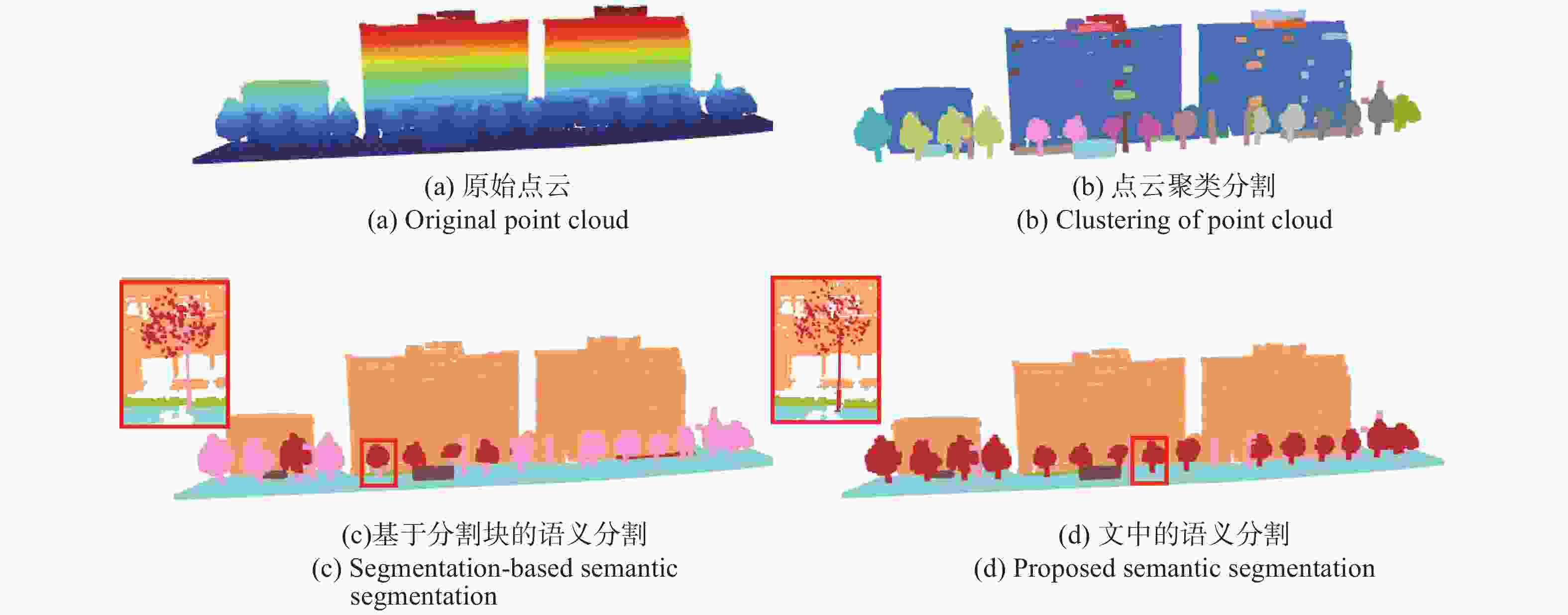

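Taking data 1 as an example, the final classification result is shown in Figure 1.

In Figure 1, (a) is the original point cloud; (b) is the point cloud after DBSCAN clustering, where different colors represent different segmented blocks, which are the basis of semantic segmentation; (c) is the semantic segmentation result obtained by classifying the blocks directly without the merging strategy; and (d) is the semantic segmentation result of the proposed method. In (c), because the clustered blocks are not merged, some street trees and street-tree trunks are identified as pole-like objects, which gives a wrong semantic segmentation. Looking at a single tree, the trunk in (c) is wrongly labeled as a pole-like object (the trunk of the tree in the red box is pink), whereas in (d) the proposed block merging strategy merges trunk and crown before feature extraction and classification, so the street tree is segmented as a whole.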

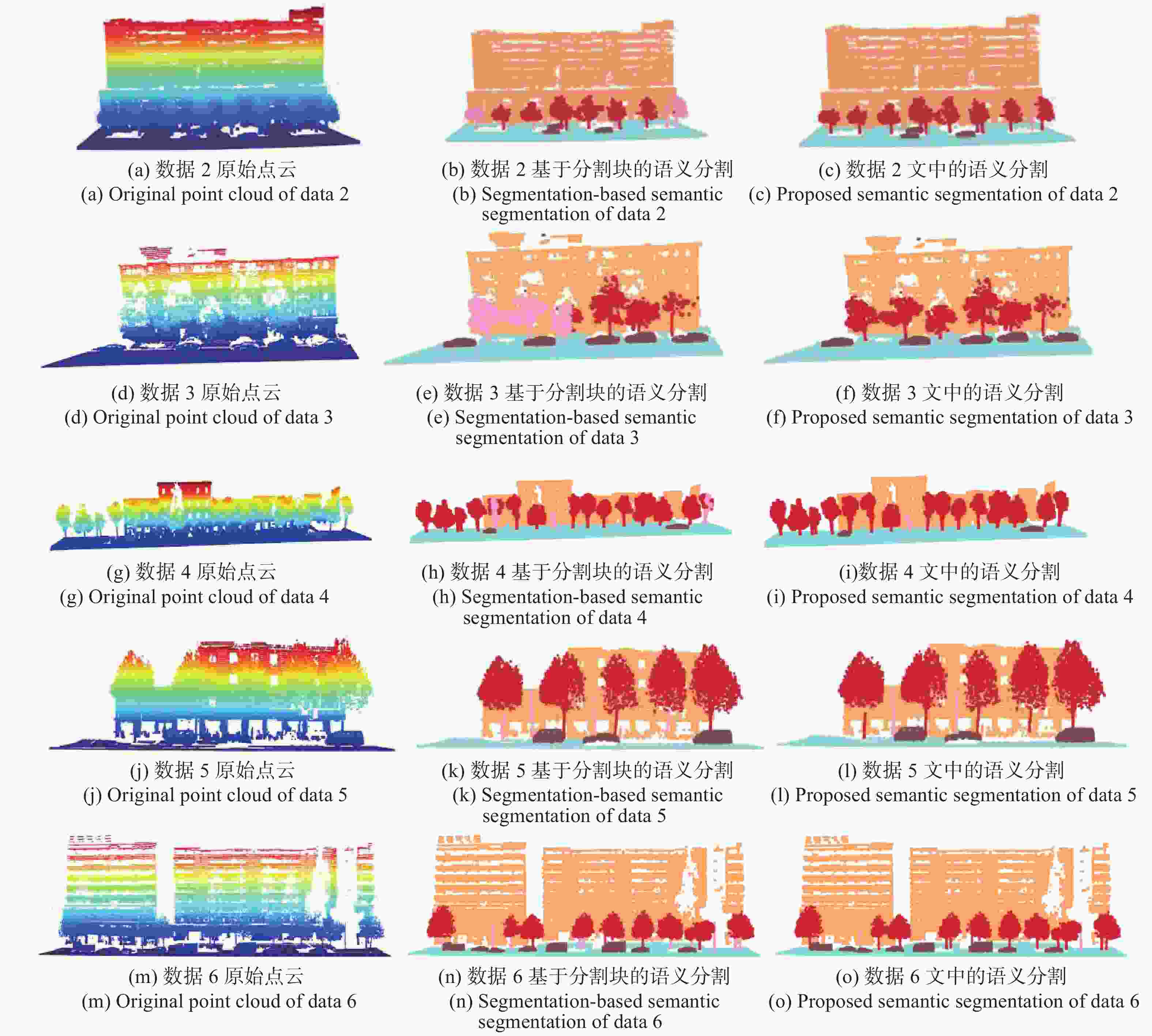

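Similarly, the semantic segmentation results for the other five groups of data are shown in Figure 2, where each row corresponds to a different group. Comparing the third column with the second column shows that the proposed block merging strategy improves the final semantic segmentation.

Figure 2. Comparison of semantic segmentation of data 2, data 3, data 4, data 5 and data 6 before and after using the merge strategy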

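The precision and recall of pole-like objects and street trees in the six scenes, without and with the proposed merging strategy, are listed in Tables 3 and 4.

Table 3. Precision (Acc) and recall of pole-like objects

| Region | Original Acc | Original Recall | Proposed Acc | Proposed Recall |
| --- | --- | --- | --- | --- |
| Region1 | 6.9% | 89.1% | 49.7% | 95.2% |
| Region2 | 5.4% | 81.0% | 100% | 87.1% |
| Region3 | - | - | - | - |
| Region4 | 5.8% | 27.8% | 100% | 27.8% |
| Region5 | 61.0% | 100% | 100% | 100% |
| Region6 | 21.2% | 80.3% | 100% | 80.3% |

Table 4. Precision (Acc) and recall of street trees

| Region | Original Acc | Original Recall | Proposed Acc | Proposed Recall |
| --- | --- | --- | --- | --- |
| Region1 | 62.0% | 12.7% | 99.6% | 99.9% |
| Region2 | 87.9% | 76.2% | 99.8% | 100% |
| Region3 | 100% | 45.4% | 100% | 99.7% |
| Region4 | 99.4% | 96.2% | 99.4% | 100% |
| Region5 | 100% | 98.8% | 100% | 100% |
| Region6 | 99.7% | 95.1% | 99.7% | 100% |

Because data 3 contains no pole-like objects, precision and recall of pole-like objects are not computed for it. Tables 3 and 4 show that in every scene the proposed merging strategy improves, or at least maintains, the precision and recall of pole-like objects and street trees, in many cases substantially. In detail, for data 1, as shown in Figure 3(a), the scene contains curbstones whose points are connected to pole-like objects, and DBSCAN clustering cannot separate them accurately; as a result, even with the proposed merging strategy the precision of pole-like objects is difficult to improve. For data 4, as shown in Figure 3(b), the pole-like objects are occluded by street trees and are hard to separate during clustering segmentation, so they are classified as street trees and the final recall of pole-like objects is low.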

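To further verify the effectiveness of the proposed merging strategy, two approaches are compared: classifying the segmented blocks directly with a support vector machine (SVM), and applying the merging strategy before SVM classification. The features selected for the test are the linearity and the scattering of a segmented block. Linearity is given by formula (6); analogously, scattering is computed as follows:

$$S_\lambda = \frac{\lambda_3}{\lambda_1}$$ (15)

where $\lambda_1$ and $\lambda_3$ are the largest and smallest eigenvalues of the current segmented block, respectively.

Taking data 1 as an example, linearity and scattering are computed for the merged street trees and pole-like objects, and their feature distribution is shown in Figure 4. The horizontal axis is the linearity of a block and the vertical axis is its scattering; green points show the feature distribution of the merged street trees and red points that of the merged pole-like objects. The plot shows that the scattering of street trees is generally greater than that of pole-like objects and that the linearity of street trees is mostly lower than that of pole-like objects, which indicates that the merged street trees and pole-like objects are highly separable.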

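The SVM-based method is tested on the street trees and pole-like objects in the six regions, with and without the proposed merging strategy. The results are shown in Figure 5, where each row corresponds to a different group of data, the middle column shows the result of classifying directly with the SVM, and the right column shows the result of applying the merging strategy before SVM classification. When the SVM classifier is applied directly to the segmented blocks, some street-tree trunks are classified as pole-like objects; applying the merging strategy reduces this type of error. For quantitative analysis, the final precision and recall of the two SVM-based approaches are computed, as listed in Tables 5 and 6.

Figure 5. Comparison of semantic segmentation of data 1, data 2, data 3, data 4, data 5 and data 6 before and after using the merge strategy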

Table 5. Precision (Acc) and recall of pole-like objects (SVM)

| Region | Original Acc | Original Recall | Proposed Acc | Proposed Recall |
| --- | --- | --- | --- | --- |
| Region1 | 42.9% | 95.2% | 49.4% | 95.2% |
| Region2 | 20.4% | 87.1% | 100% | 87.1% |
| Region3 | - | - | - | - |
| Region4 | 24.9% | 27.8% | 100% | 27.8% |
| Region5 | 61% | 100% | 100% | 100% |
| Region6 | 33.2% | 80.3% | 100% | 94.4% |

Table 6. Precision (Acc) and recall of street trees (SVM)

| Region | Original Acc | Original Recall | Proposed Acc | Proposed Recall |
| --- | --- | --- | --- | --- |
| Region1 | 99.6% | 97.7% | 99.6% | 99.9% |
| Region2 | 99.8% | 95.4% | 99.8% | 100% |
| Region3 | 100% | 99.3% | 100% | 99.7% |
| Region4 | 99.4% | 99.3% | 99.4% | 100% |
| Region5 | 100% | 98.8% | 100% | 100% |
| Region6 | 99.7% | 96.9% | 99.9% | 100% |

The analysis shows that, with the proposed merging strategy, the precision of pole-like objects and the recall of street trees mostly rise to high values. The precision of pole-like objects in data 1 and the recall of pole-like objects in data 4 remain low for the same reasons as analyzed in Section 2.2.1.

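After classification with the block merging strategy yields a coarse semantic segmentation, some segmented blocks in the original point cloud still have no label. KNN (k-nearest neighbor) interpolation optimization addresses this problem. A kd-tree is built on the classified points; for each unlabeled point, its k nearest neighbors are retrieved and the point is assigned the class that is most frequent among them, which gives the refined semantic segmentation. Taking data 1 as an example, the results before and after interpolation optimization are shown in Figure 6. In (a), the blue points are the unlabeled points before optimization; after KNN interpolation optimization is applied to them, (b) shows that the semantic segmentation is improved.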
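The label-filling step can be sketched as a majority vote over the k nearest labeled points. In this illustration a brute-force sort stands in for the kd-tree search, and the function name, the value of `k` and the toy data are assumptions; the paper does not report the value of k it uses.

```python
import math
from collections import Counter

def knn_fill_labels(labeled_points, labels, unlabeled_points, k=5):
    """Give each unlabeled point the most frequent label among its k nearest labeled points."""
    filled = []
    for q in unlabeled_points:
        # Brute-force neighbor search; a kd-tree would replace this sort in practice.
        nearest = sorted(range(len(labeled_points)),
                         key=lambda i: math.dist(q, labeled_points[i]))[:k]
        votes = Counter(labels[i] for i in nearest)
        filled.append(votes.most_common(1)[0][0])
    return filled

# Toy data (hypothetical): three tree points, three pole points, two unlabeled points.
labeled = [(0.0, 0.0, 5.0), (0.2, 0.0, 5.0), (0.0, 0.2, 5.0),
           (10.0, 0.0, 1.0), (10.0, 0.0, 1.5), (10.0, 0.0, 2.0)]
labels = ["tree", "tree", "tree", "pole", "pole", "pole"]
unlabeled = [(0.1, 0.0, 5.0), (10.0, 0.1, 1.0)]
filled = knn_fill_labels(labeled, labels, unlabeled, k=3)
```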

-

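This paper proposes a point cloud semantic segmentation method based on a segmented-block merging strategy, which achieves the semantic segmentation of street trees and pole-like objects by merging and classifying the results of clustering segmentation. In addition, interpolation optimization is applied to the segmented point cloud, which improves the semantic segmentation result.

To verify the effectiveness of the proposed method, the merging strategy is tested with both a rule-matching classifier and an SVM classifier. The experimental results show that, compared with the original methods, the proposed method raises the recall and precision of street trees and pole-like objects in urban road environments to above 89.9% on average. The applicable scenarios of the method are still limited: in some particular outdoor scenes, such as different classes of ground objects being tightly connected or pole-like objects being occluded by street trees, the clustering segmentation of the point cloud is affected, which leads to low final precision and recall. How to handle mutual occlusion between ground objects in complex outdoor scenes so as to achieve high-precision semantic segmentation therefore remains a problem for future research.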

Point cloud semantic segmentation method based on segmented blocks merging

-

Abstract: With the rapid development of three-dimensional point cloud acquisition tools such as light detection and ranging (LiDAR), the semantic information of point clouds has become increasingly important in computer vision, intelligent driving, remote sensing and mapping, and smart cities. Point cloud semantic segmentation methods based on segmented-block feature matching cannot handle over-segmented and under-segmented blocks, and their semantic segmentation precision for street trees and pole-like objects is low. To address these limitations, a point cloud semantic segmentation method for street trees and pole-like objects based on a segmented-block merging strategy is proposed. The method merges the blocks of interest obtained after density-based spatial clustering of applications with noise (DBSCAN) clustering segmentation, classifies the merged objects by computing their multi-dimensional geometric features, and refines the segmentation results with an interpolation optimization algorithm, finally achieving the semantic segmentation of street trees and pole-like objects in urban road environments. The experimental results show that the proposed method raises the recall and semantic segmentation precision of point cloud data such as street trees and pole-like objects in urban road environments to above 89.9% on average. The semantic segmentation method based on block merging effectively addresses the low semantic segmentation precision of street trees and pole-like objects on urban roads, and is of significance for research on three-dimensional scene perception and related problems.

-

Key words: LiDAR; 3D point cloud; semantic segmentation; block merge; street tree

-


[1] Du Ruijian, Ge Baozhen, Chen Lei. Texture mapping of multi-view high-resolution images and binocular 3D point clouds [J]. Chinese Optics, 2020, 13(5): 1055-1064. (in Chinese) doi: 10.37188/CO.2020-0034

[2] Wei Yu, Jiang Shilei, Sun Guobin, et al. Design of solid-state array laser radar receiving optical system [J]. Chinese Optics, 2020, 13(3): 517-526. (in Chinese) doi: 10.3788/CO.2019-0166

[3] Li Yong, Tong Guofeng, Yang Jingchao, et al. 3D point cloud scene data acquisition and its key technology for scene understanding [J]. Laser & Optoelectronics Progress, 2019, 56(4): 13-26. (in Chinese) doi: 10.3788/LOP56.040002

[4] Zhang Xitong, Li Yongqiang, Mao Jie, et al. Segmentation of connected street trees from mobile LiDAR data [J]. Science of Surveying and Mapping, 2016, 41(8): 111-115. (in Chinese) doi: 10.16251/j.cnki.1009-2307.2016.08.023

[5] Feng Yicong, Cen Minyi, Zhang Tonggang. Knowledge-based automatic objects classification from mobile LiDAR data [J]. Computer Engineering and Applications, 2016, 52(5): 122-126. (in Chinese) doi: 10.3778/j.issn.1002-8331.1403-0361

[6] Xie Yuxing, Tian Jiaojiao, Zhu Xiaoxiang. Linking points with labels in 3D: A review of point cloud semantic segmentation [J]. IEEE Geoscience and Remote Sensing Magazine, 2020, 8(4): 38-59. doi: 10.1109/MGRS.2019.2937630

[7] Weinmann M, Jutzi B, Hinz S, et al. Semantic point cloud interpretation based on optimal neighborhoods, relevant features and efficient classifiers [J]. ISPRS Journal of Photogrammetry and Remote Sensing, 2015, 105: 286-304. doi: 10.1016/j.isprsjprs.2015.01.016

[8] Li Haiting, Wang Houzhi, Li Yanhong, et al. Object recognition for vehicle-borne LiDAR point clouds based on SVM [J]. Science of Surveying and Mapping, 2016, 41(5): 45-49. (in Chinese) doi: 10.16251/j.cnki.1009-2307.2016.05.010

[9] Liu Zhiqing, Li Pengcheng, Guo Haitao, et al. Airborne LiDAR point cloud data classification based on relevance vector machine [J]. Infrared and Laser Engineering, 2016, 45(S1): S130006. (in Chinese) doi: 10.3788/IRLA201645.S130006

[10] Tong Guofeng, Du Xiance, Li Yong, et al. Three-dimensional point cloud classification of large outdoor scenes based on vertical slice sampling and centroid distance histogram [J]. Chinese Journal of Lasers, 2018, 45(10): 1004001. (in Chinese) doi: 10.3788/CJL201845.1004001

[11] Xiang B, Yao J, Lu X, et al. Segmentation-based classification for 3D point clouds in the road environment [J]. International Journal of Remote Sensing, 2018, 39(19): 6182-6212. doi: 10.1080/01431161.2018.1455235

[12] Tang Yong, Xiang Zheng, Jiang Tengping, et al. Semantic classification of pole-like traffic facilities in complex road scenes based on LiDAR point cloud [J]. Tropical Geography, 2020, 40(5): 893-902. (in Chinese) doi: 10.13284/j.cnki.rddl.003263

[13] Li Pengpeng, Li Yongqiang, Zhao Shangbin, et al. Automatic classification of pole-like objects in road scene by back propagation neural network [J]. Bulletin of Surveying and Mapping, 2020(4): 101-105, 120. (in Chinese)

[14] Zhang Zhongyu, Liu Yunpeng, Wang Sikui, et al. Vehicle target recognition algorithm for UAV image based on DRFP [J]. Infrared and Laser Engineering, 2019, 48(S2): S226001. (in Chinese) doi: 10.3788/IRLA201948.S226001

[15] Liang Xinkai, Song Chuang, Zhao Jiajia. Depth estimation technique of sequence image based on deep learning [J]. Infrared and Laser Engineering, 2019, 48(S2): S226002. (in Chinese) doi: 10.3788/IRLA201948.S226002

[16] Yang Qili, Zhou Binghong, Zheng Wei, et al. Trajectory detection of small targets based on convolutional long short-term memory with attention mechanisms [J]. Optics and Precision Engineering, 2020, 28(11): 2535-2548. (in Chinese) doi: 10.37188/OPE.20202811.2535

[17] Milioto A, Vizzo I, Behley J, et al. RangeNet++: Fast and accurate LiDAR semantic segmentation[C]//2019 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS). IEEE, 2019: 4213-4220.

[18] Graham B, Engelcke M, Van Der Maaten L. 3D semantic segmentation with submanifold sparse convolutional networks[C]//Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2018: 9224-9232.

[19] Meng H Y, Gao L, Lai Y K, et al. Vv-net: Voxel vae net with group convolutions for point cloud segmentation[C]//Proceedings of the IEEE International Conference on Computer Vision, 2019: 8500-8508.

[20] Wang Yue, Sun Yongbin, Liu Ziwei, et al. Dynamic graph cnn for learning on point clouds [J]. ACM Transactions on Graphics, 2019, 38(5): 1-12. doi: 10.1145/3326362

[21] Ma L, Li Y, Li J, et al. Multi-scale point-wise convolutional neural networks for 3D object segmentation from LiDAR point clouds in large-scale environments [J]. IEEE Transactions on Intelligent Transportation Systems, 2019, 22(2): 821-836. doi: 10.1109/TITS.2019.2961060

[22] Hu Q, Yang B, Xie L, et al. RandLA-Net: Efficient semantic segmentation of large-scale point clouds[C]//2020 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), IEEE, 2020: 11105-11114.

[23] Feng M, Zhang L, Lin X, et al. Point attention network for semantic segmentation of 3D point clouds [J]. Pattern Recognition, 2020, 107: 107446. doi: 10.1016/j.patcog.2020.107446

[24] Yang Jun, Dang Jisheng. Recognition and segmentation of three-dimensional point cloud based on deep cascade convolutional neural network [J]. Optics and Precision Engineering, 2020, 28(5): 1187-1199. (in Chinese) doi: 10.3788/OPE.20202805.1187

[25] Landrieu L, Simonovsky M. Large-scale point cloud semantic segmentation with superpoint graphs[C]//Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2018: 4558-4567.

[26] Ester M, Kriegel H P, Sander J, et al. Density-based spatial clustering of applications with noise[C]//Proceedings of 2nd International Conference on Knowledge Discovery and Data Mining, 1996, 240: 6.
