-

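Honeycomb sandwich composites are widely used in the primary and secondary load-bearing structures of aerospace vehicles because of their excellent physical properties. Their manufacturing process is complex and they often serve in harsh environments, so they are prone to defects such as delamination, debonding, water accumulation, and glue plugging [1-2]; the most common are debonding between the skin and the adhesive layer and between the adhesive layer and the honeycomb core. These defects are usually small, discontinuous, and concealed, and are therefore hard to find before the material fails. When an internal or external disturbance such as vibration, impact, or internal stress suddenly acts on the structure, they can pose a fatal threat to it, seriously impair the normal use of the component, endanger personnel, and cause economic loss. Detecting and identifying the internal defects of GFRP/NOMEX honeycomb sandwich structures quickly, efficiently, and accurately has therefore become both a research focus and a challenge in the field.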

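Infrared nondestructive testing [3-5] has developed rapidly in recent years; compared with conventional inspection methods it gives intuitive results and covers a large area in a single inspection. Its physical basis is to scan, record, or observe the temperature changes on the surface of the inspected component that arise because a defect and the base material have different thermophysical properties, and thereby to detect the defect. Optical pulsed infrared thermography is among the most studied and most widely used variants: a high-energy pulse is applied to the specimen surface, an infrared camera records the evolution of the surface temperature, and defect information is derived from heat-conduction theory. Different defect types appear differently in the infrared image because their thermophysical parameters differ, so the surface temperature field after excitation differs and cold spots or hot spots form. The thermogram is finally visualized by mapping temperature to color.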

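For defect inspection of composites, machine vision has increasingly replaced human vision in recent years: the data are labeled and a deep-learning model is trained to recognize defects, which is inexpensive, efficient, and flexible. The deep convolutional neural network [6] is a classic multilayer feed-forward artificial neural network with strong feature-extraction ability, high accuracy, good robustness, and high recognition efficiency, and it has therefore been widely applied to image-processing tasks such as defect classification and object detection. Mature convolutional neural network models, however, are usually complex and have many parameters to learn, so training is complicated and time-consuming, requires a large number of training samples, and places high demands on hardware.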

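Transfer learning [7-8] is a machine-learning approach that uses knowledge from an existing domain to solve problems in a different but related domain. A network that has already been trained is adjusted to fit the new task, so the network need not be trained from scratch; hardware requirements are lower and training is faster. Transfer learning has therefore been widely applied in many fields in recent years, with good results.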

-

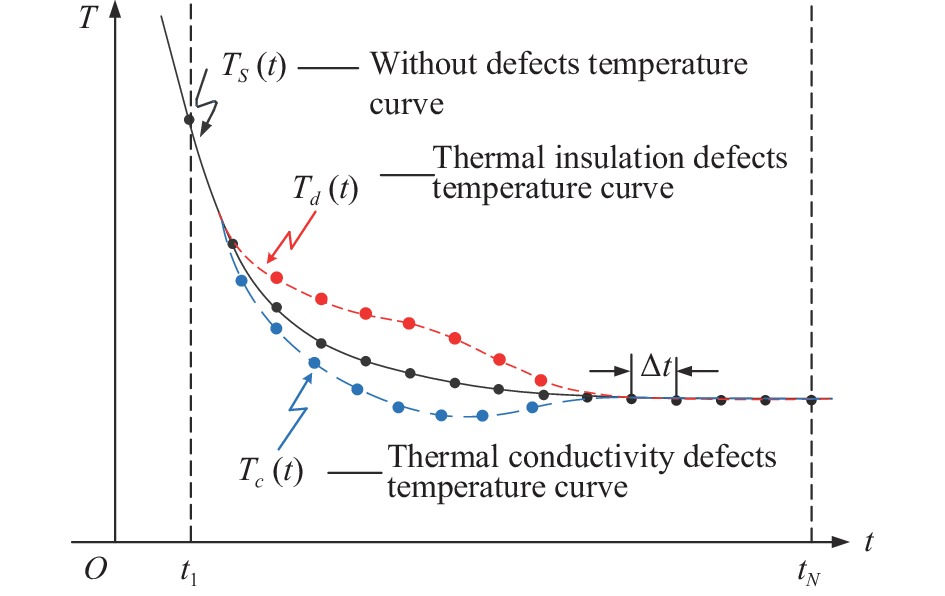

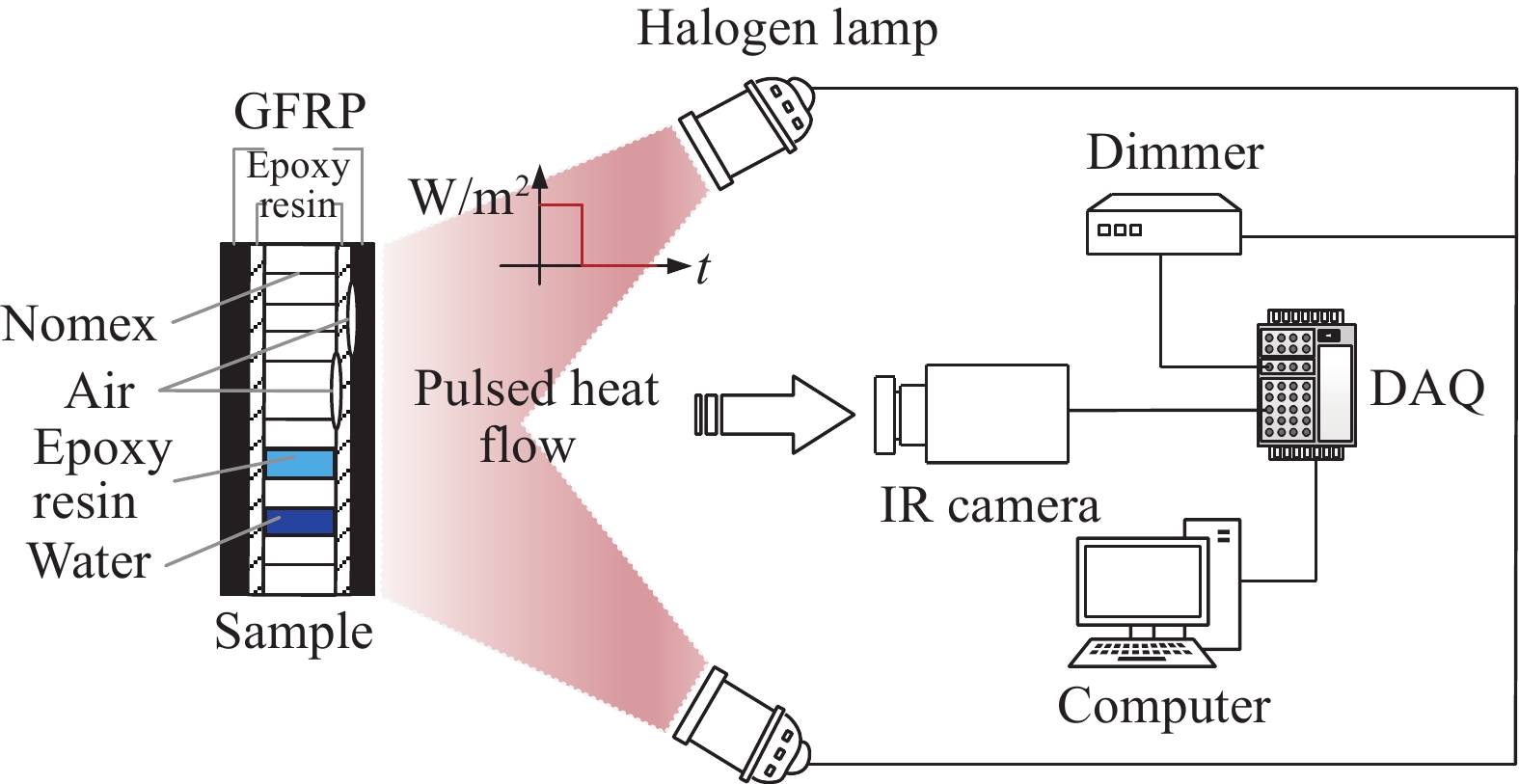

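A reflection-mode pulsed infrared thermography nondestructive testing system was built from a FLIR A655SC infrared camera, focusing halogen lamps, a dimmer, a data acquisition card, and LabVIEW software; Fig. 1 shows the schematic. LabVIEW generates the pulse waveform, the data acquisition card passes the signal to the dimmer, and the dimmer drives the halogen lamps to apply active pulsed thermal excitation to the GFRP/NOMEX honeycomb sandwich specimen. Because the thermophysical parameters at a defect differ from those of sound material, different defect types produce different surface temperatures. According to their heat-transfer capability, defects can be divided into insulating defects and conductive defects: above an insulating defect the surface is hotter than over sound material, producing a hot spot, whereas a conductive defect does the opposite and produces a cold spot. Fig. 2 shows the heat-transfer process for the different defect types in reflection-mode infrared thermal-wave testing, and Fig. 3 shows the corresponding surface temperature curves.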

-

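The specimens are GFRP/NOMEX honeycomb sandwich structures containing different defect types, denoted #A1, #A2, and #A3. Each measures 160 mm×140 mm×5.2 mm. The upper and lower skins are 0.5 mm thick glass-fiber-reinforced composite, bonded to a 4 mm aramid-paper honeycomb core by an upper and a lower 0.1 mm epoxy adhesive layer. The three specimens contain four classes of defect: glue plugging, water accumulation, debonding between the upper skin and the adhesive film (denoted "debonding 1"), and debonding between the adhesive film and the honeycomb (denoted "debonding 2"). Debonding is simulated by machining flat-bottomed blind holes; water accumulation and glue plugging are simulated by injecting water and epoxy resin into the honeycomb cells. The specimens with simulated defects are shown in Fig. 4, and the defect types of each specimen are listed in Table 1.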

Table 1. Defect types of the specimens

| Test specimen | First column | Second column | Third column | Fourth column |
|---|---|---|---|---|
| #A1 | Water accumulation | Glue plugging | Glue plugging | Glue plugging |
| #A2 | Water accumulation | Glue plugging | Debonding 1 | - |
| #A3 | Water accumulation | Debonding 2 | Debonding 1 | - |

-

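The halogen lamps apply a thermal pulse to the specimen surface, heat flows into the specimen, and internal defects disturb that flow. Debonding is an insulating defect: the adhesive film separates from the Nomex core or from the GFRP skin, leaving air between the layers, and because air has a low thermal conductivity the heat flow is impeded, which shows up on the surface as a hot spot. Water accumulation arises when water enters the honeycomb cells in service; glue plugging arises when locally excessive epoxy resin during manufacture leaves resin in the cells. In a healthy specimen the cells are filled with air, so when heat propagates inward, the much higher thermal conductivity of water and resin compared with air produces a cold spot on the surface. The infrared camera records the surface temperature in real time during excitation and cooling, and the surface temperature field is finally visualized by color.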
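The hot-spot and cold-spot rule described above can be sketched as a sign test on the thermal contrast between a suspect region and sound material. This is a minimal illustration, not the processing used in the study: the conductivity constants are approximate textbook values, and the function name `spot_type` and the noise threshold `tol` are hypothetical.

```python
# Approximate room-temperature thermal conductivities in W/(m*K).
# Textbook orders of magnitude, not values measured in the experiment.
K_AIR = 0.026
K_WATER = 0.6
K_EPOXY = 0.2


def spot_type(t_defect, t_sound, tol=0.05):
    """Label a region from its surface-temperature contrast with sound material.

    t_defect, t_sound: surface temperatures (same unit) at the same instant.
    tol: hypothetical noise threshold below which no contrast is reported.
    """
    contrast = t_defect - t_sound
    if contrast > tol:
        return "hot spot (insulating defect, e.g. debonding)"
    if contrast < -tol:
        return "cold spot (conductive defect, e.g. water or glue)"
    return "no contrast"


print(spot_type(31.2, 30.5))   # region warmer than sound material
print(spot_type(29.8, 30.5))   # region cooler than sound material
```

Both water and epoxy conduct heat roughly an order of magnitude better than air, which is why a filled cell draws heat away from the surface faster than an empty one.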

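The thermography tests on the GFRP/NOMEX honeycomb sandwich structure used a pulse width of 15 s, halogen lamp powers of 1800, 1900, and 2000 W, a sampling frequency of 20 Hz, and an acquisition time of 30 s.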

-

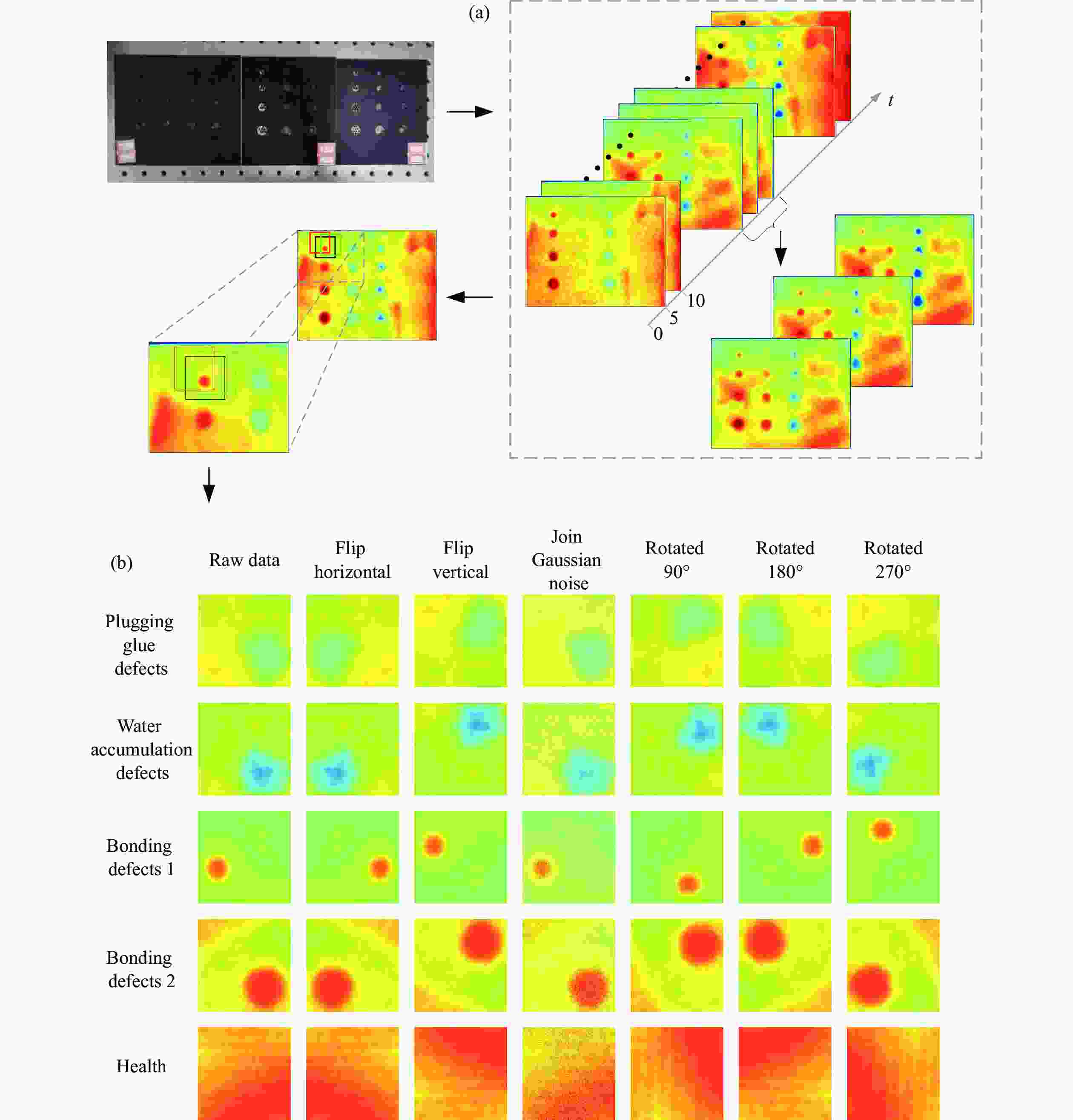

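Each image acquired by the infrared camera is 480 pixel×640 pixel, and a sequence contains 600 thermograms. With an excitation pulse width of 15 s, the first 300 frames cover the heating stage and the last 300 the cooling stage. Thermograms taken near the peak surface temperature show high defect contrast, little redundant noise, and clear defect information, so several of these frames were selected and sampled over defect and healthy regions to build a five-class deep-learning data set. To keep the defect information complete, the defect and healthy images were sampled at 90 pixel×90 pixel; the sampling process is shown in Fig. 5.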
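The frame counts follow directly from the acquisition settings, and the 90 pixel×90 pixel sampling can be sketched as a crop that is shifted to stay inside the frame. The helper `crop_patch`, the choice of the last heating frame as the peak, and the placeholder frame are assumptions for illustration; the source does not say how the patch centres or frames were chosen.

```python
SAMPLING_HZ = 20      # sampling frequency from the test settings
ACQ_TIME_S = 30       # total acquisition time
PULSE_WIDTH_S = 15    # heating (excitation) duration
PATCH = 90            # patch side length in pixels

n_frames = SAMPLING_HZ * ACQ_TIME_S        # 600 thermograms per sequence
n_heating = SAMPLING_HZ * PULSE_WIDTH_S    # first 300 frames are heating
peak_frame = n_heating - 1  # assumption: 0-based index of the last heating frame


def crop_patch(frame, cx, cy, size=PATCH):
    """Crop a size x size patch centred near column cx, row cy.

    frame is a list of rows; the window is shifted inward when the
    requested centre lies too close to an edge.
    """
    rows, cols = len(frame), len(frame[0])
    top = min(max(cy - size // 2, 0), rows - size)
    left = min(max(cx - size // 2, 0), cols - size)
    return [row[left:left + size] for row in frame[top:top + size]]


frame = [[0.0] * 640 for _ in range(480)]   # placeholder 480 x 640 thermogram
patch = crop_patch(frame, cx=20, cy=470)    # near a corner, still 90 x 90
```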

-

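Because the specimens and the number and variety of their defects are limited, the data set was augmented to maintain training accuracy and to prevent overfitting: images were rotated by 90°, 180°, and 270°, flipped horizontally and vertically, and corrupted with Gaussian noise, which improves the generalization ability of the model. The augmentation process is shown in Fig. 5. The augmented infrared data set contains 8064 image samples; the number and share of samples in each class are given in Table 2.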
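The six augmentation operations can be sketched on a small list-of-rows image as follows. The rotation direction, the noise standard deviation `sigma`, and the fixed `seed` are assumptions; the source names the operations but not their parameters.

```python
import random


def rot90(img):
    """Rotate a 2-D list image by 90 degrees clockwise."""
    return [list(row) for row in zip(*img[::-1])]


def hflip(img):
    """Mirror left to right."""
    return [row[::-1] for row in img]


def vflip(img):
    """Mirror top to bottom."""
    return [list(row) for row in img[::-1]]


def add_gaussian_noise(img, sigma=0.01, seed=0):
    """Add zero-mean Gaussian noise to every pixel (sigma is hypothetical)."""
    rng = random.Random(seed)
    return [[v + rng.gauss(0.0, sigma) for v in row] for row in img]


def augment(img):
    """Return the original image followed by its six augmented variants."""
    r90 = rot90(img)
    r180 = rot90(r90)
    r270 = rot90(r180)
    return [img, r90, r180, r270, hflip(img), vflip(img),
            add_gaussian_noise(img)]


sample = [[1, 2], [3, 4]]
variants = augment(sample)
```

Each source image yields seven samples here (the original plus six variants). The reported total of 8064 is divisible by 7, as is every per-class count in Table 2, which is consistent with this reading, although the source does not state it explicitly.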

Table 2. Number of samples of each defect type in the infrared image data set

| Type of defect | Glue plugging | Water accumulation | Debonding 1 | Debonding 2 | Healthy |
|---|---|---|---|---|---|
| Sample size | 2457 | 2079 | 1512 | 756 | 1260 |
| Proportion | 30.5% | 25.8% | 18.8% | 9.4% | 15.6% |

-

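The augmented data set was split at random: 60% forms the training set, used to learn the network weights and biases; 20% forms the validation set, which takes no part in gradient descent and serves only as a reference for choosing hyperparameters when tuning the network; and the remaining 20% forms the test set, used to evaluate the model on unseen data.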
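A random 60/20/20 split can be sketched with a shuffled index list. The function name, the seed, and the decision to give the rounding remainder to the last subset are assumptions; the source does not describe how the split was implemented.

```python
import random


def split_indices(n, train_frac=0.6, val_frac=0.2, seed=42):
    """Shuffle sample indices and cut them into train/validation/test lists."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    n_train = int(n * train_frac)
    n_val = int(n * val_frac)
    # Whatever is left after the first two cuts becomes the test set.
    return (idx[:n_train],
            idx[n_train:n_train + n_val],
            idx[n_train + n_val:])


train, val, test = split_indices(8064)
print(len(train), len(val), len(test))
```

With 8064 samples the training subset contains 4838 indices, which matches the training-set size quoted in the training settings.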

-

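A convolutional neural network is a feed-forward neural network built on the multilayer perceptron. Its strong feature-extraction ability has led to wide use in vision tasks such as defect detection, object recognition, and semantic segmentation. Convolutional networks also share weights: when image data run into the millions, a conventional fully connected network needs a very large number of parameters, whereas a convolutional network greatly reduces the computational cost and speeds up training.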

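When a convolutional neural network processes image data, several convolutional layers, activation functions, and pooling layers first extract feature information, and fully connected layers then classify the result; this is forward propagation. The prediction is compared with the target label and the error gives the loss function, which acts as a supervisory signal for adjusting the model parameters: the weights and biases are updated in the direction that decreases the loss, that is, along the negative gradient. This is backpropagation. Iterating these updates minimizes the error and completes the learning of the network.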

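1) Convolutional layer

Convolution extracts image features. A convolution kernel slides over the feature map and is convolved with it at each position; the whole image shares one kernel and therefore its weights and bias. A single kernel extracts only one feature, so each convolutional layer holds several kernels in order to extract multiple features from an image and increase the expressive power of the network. Fig. 6 visualizes the feature maps obtained by convolving water-accumulation and debonding defects.

2) Activation layer

The activation layer adds nonlinearity to the network by applying a nonlinear mapping to the convolution output. Without it, the network remains a linear mapping however many hidden layers it has and cannot solve nonlinear problems. The rectified linear unit (ReLU) is a commonly used activation function, given by Eq. (1).

$$ f(x) = \max (0,x) $$ (1)

ReLU is one-sided: a negative input gives zero output, so the neuron is not activated, while a positive input passes through unchanged. The neurons of the network therefore activate sparsely, which helps the network fit the training data. Because the gradient of ReLU is constant for positive inputs, it also helps avoid vanishing gradients and lets the model converge stably.

3) Pooling layer

The pooling layer compresses the feature map, which reduces network complexity and the parameters and computation of the following layers, helps prevent overfitting, and enlarges the receptive field. Pooling also discards some information and lowers the resolution. Max pooling and average pooling are the common pooling operations.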
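The three layer types above can be sketched in plain Python on a tiny single-channel image. The kernel, the image, and the function names are illustrative assumptions; as in most deep-learning frameworks, the "convolution" is implemented as a sliding dot product without flipping the kernel.

```python
def conv2d_valid(img, kernel, bias=0.0):
    """Slide one kernel over a 2-D image (stride 1, no padding)."""
    kh, kw = len(kernel), len(kernel[0])
    out_h, out_w = len(img) - kh + 1, len(img[0]) - kw + 1
    return [[bias + sum(img[i + a][j + b] * kernel[a][b]
                        for a in range(kh) for b in range(kw))
             for j in range(out_w)]
            for i in range(out_h)]


def relu(fmap):
    """Eq. (1) applied element-wise."""
    return [[max(0.0, v) for v in row] for row in fmap]


def max_pool2x2(fmap):
    """Non-overlapping 2 x 2 max pooling (stride 2)."""
    return [[max(fmap[i][j], fmap[i][j + 1],
                 fmap[i + 1][j], fmap[i + 1][j + 1])
             for j in range(0, len(fmap[0]) - 1, 2)]
            for i in range(0, len(fmap) - 1, 2)]


image = [[0, 0, 1, 1, 1],
         [0, 0, 1, 1, 1],
         [0, 0, 1, 1, 1],
         [0, 0, 1, 1, 1],
         [0, 0, 1, 1, 1]]
edge_kernel = [[-1, 1], [-1, 1]]   # responds to a dark-to-bright vertical edge

features = max_pool2x2(relu(conv2d_valid(image, edge_kernel)))
```

The 5×5 image becomes a 4×4 feature map after the 2×2 kernel and a 2×2 map after pooling; only the column containing the edge keeps a non-zero response.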

-

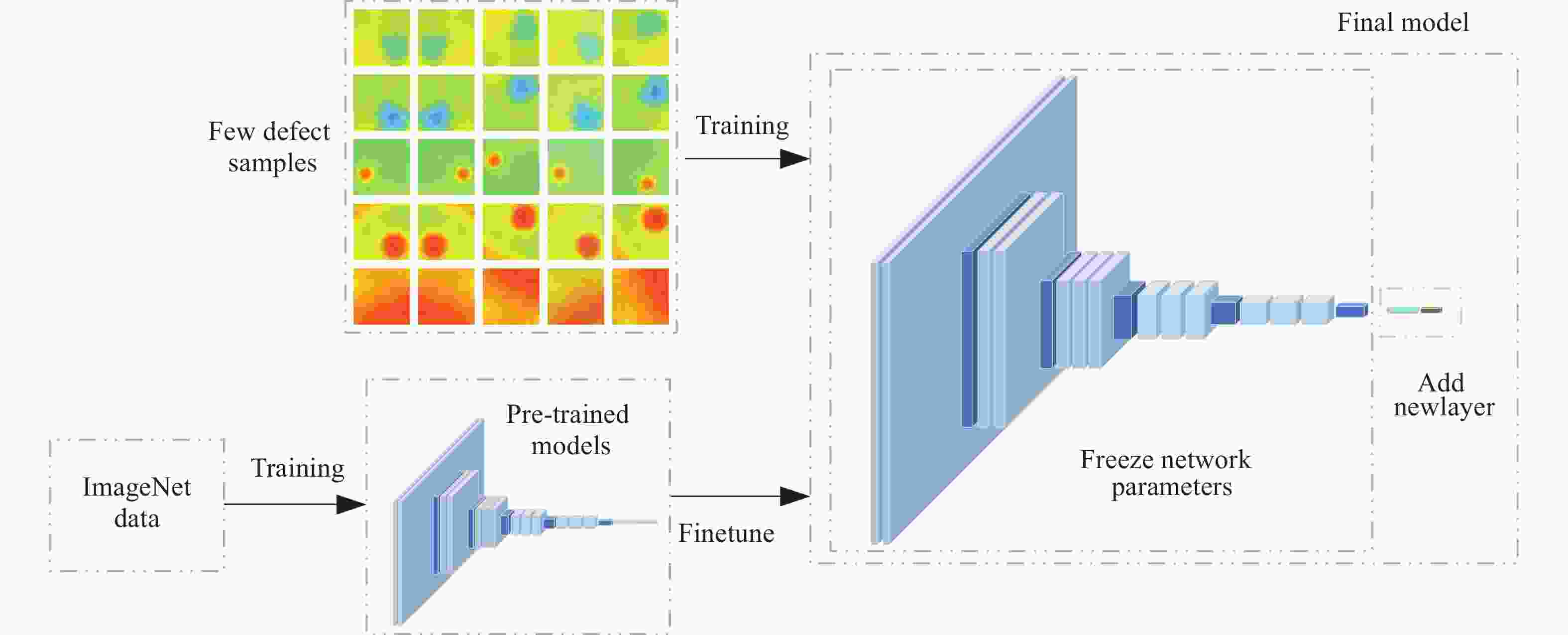

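The shallow layers of a convolutional neural network capture general features such as contours and edges, and features learned there are generic. When samples are too few to train a large network, a network already trained on a large data set can therefore be reused through transfer learning. This paper applies fine-tuning, a model-based transfer-learning technique, to classify defects in GFRP/NOMEX sandwich structures. The shallow layers are frozen so that their weights and biases stay fixed, and only the layers modified or added at the end of the model are trained. This focuses the model on the features of the defect samples, saves training time, improves generalization, and avoids the overfitting that results from training a large network directly on a small sample.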

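ImageNet is a large database widely used in computer vision; it contains about 1 million images in 1000 categories. Because of this volume and variety, models pretrained on ImageNet are able to extract defect features. Several classic models pretrained on ImageNet (such as VGGNet [9-10] and ResNet [11]) are fine-tuned here, and their classification of infrared images of GFRP/NOMEX honeycomb sandwich defects is compared. As shown in Fig. 7, the parameters of the first several layers of the pretrained model are frozen and used for image feature extraction, while the last several layers are replaced or modified, for example by adding fully connected layers suited to the new task, to form a new network. The fine-tuned model is then trained on the infrared defect data set to classify the defects of the GFRP/NOMEX honeycomb sandwich structure.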

-

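VGG16 consists of 1 input layer, 1 output layer, 13 convolutional layers, 5 pooling layers, and 3 fully connected layers, 16 of which carry parameters; the first convolution kernel is 3×3×3 and the pooling kernel is 2×2. Two transfer-learning variants of VGG16 are built and their classification performance compared. In the modified VGG-16-1 model (Fig. 8), the last 4 layers are removed and the weights and biases of the remaining layers are frozen. The following layers are then appended in order: a fully connected layer of dimension 1×256 for dimensionality reduction; a ReLU activation, which converges quickly and is cheap to compute; a Dropout layer (Fig. 8), which temporarily drops neurons with a certain probability to prevent overfitting, improve generalization, and keep the model from relying too heavily on particular local features; a fully connected layer of dimension 1×5, because the thermograms in the data set fall into 5 classes; and finally a Softmax layer to compute class probabilities and a classification output layer. Together these form a new network.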
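Two of the appended layers, Dropout and Softmax, are easy to illustrate numerically. The sketch below uses inverted dropout (survivors rescaled by 1/(1-p)), which is one common formulation and an assumption here; the drop probability 0.5, the four-element hidden vector, and the class scores are hypothetical stand-ins for the 256-unit layer and the real network outputs.

```python
import math
import random


def softmax(logits):
    """Convert class scores into probabilities that sum to 1."""
    m = max(logits)                       # subtract the max for stability
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def dropout(x, p, rng, training=True):
    """Inverted dropout: zero each unit with probability p during training
    and rescale the survivors by 1 / (1 - p); identity at inference."""
    if not training or p == 0.0:
        return list(x)
    return [0.0 if rng.random() < p else v / (1.0 - p) for v in x]


rng = random.Random(0)
hidden = [0.5, 1.2, 0.0, 2.0]                # stand-in for the ReLU outputs
dropped = dropout(hidden, p=0.5, rng=rng)    # p = 0.5 is a hypothetical rate
probs = softmax([2.0, 0.5, 0.1, -1.0, 0.0])  # five hypothetical class scores
predicted_class = probs.index(max(probs))
```

The predicted class is the index of the largest probability, which is what the classification output layer reports.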

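The VGG-16-2 model removes the last four layers of the original model and appends, in order, a global average pooling layer, a fully connected layer of dimension 1×5, a Softmax layer, and a classification output layer. The global average pooling layer (Fig. 8) averages each channel of the three-dimensional output of the previous layer, giving a one-dimensional vector whose length equals the number of output channels. This strengthens the link between categories and feature maps. Compared with flattening the previous feature map directly into one dimension, it greatly reduces the number of network parameters and, to some extent, suppresses overfitting in the subsequent fully connected layer.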
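The parameter saving from global average pooling can be checked with a short calculation. The 7×7×512 shape below is the output of VGG16's last pooling stage for a 224×224 input and is used purely for illustration; the source does not state the shape of the tensor that enters the pooling layer in VGG-16-2.

```python
def global_average_pool(fmap):
    """Average every channel of fmap[c][i][j], returning C channel means."""
    return [sum(sum(row) for row in ch) / (len(ch) * len(ch[0]))
            for ch in fmap]


def fc_params(n_in, n_out):
    """Weights plus biases of a fully connected layer."""
    return n_in * n_out + n_out


H, W, C, N_CLASSES = 7, 7, 512, 5   # H, W, C are an illustrative assumption

params_flatten = fc_params(H * W * C, N_CLASSES)   # flatten, then FC-5
params_gap = fc_params(C, N_CLASSES)               # GAP, then FC-5

pooled = global_average_pool([[[1, 2], [3, 4]],    # channel 0, mean 2.5
                              [[0, 0], [0, 8]]])   # channel 1, mean 2.0
print(params_flatten, params_gap, pooled)
```

Under this assumption the classifier shrinks from 125445 to 2565 parameters, a reduction by a factor of about 49.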

-

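MobileNetV2 [12-13] is a lightweight CNN proposed in 2018. The pretrained MobileNetV2 model is fine-tuned by removing the final classifier layer, freezing the weights and biases of the remaining layers, and appending, in order, a Dropout layer, a global average pooling layer, a fully connected layer of dimension 1×5, a Softmax layer, and a classification output layer.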

-

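The ResNet50, InceptionV3 [14], and DenseNet201 [15] networks pretrained on ImageNet are fine-tuned by removing their last three layers, freezing the weights and biases of the remaining layers, and adding a 1×5 fully connected layer suited to the prediction task of this study, a Softmax layer, and a classification output layer.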

-

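Training ran on a 13th Gen Intel(R) Core(TM) i9-13900HX processor (32 CPUs), ~2.2 GHz, with 16 GB of memory. The initial learning rate was 0.00005, halved every 200 iterations. Because the GPU's computing capacity was limited, the number of images processed per iteration (batch_size) was set to 200 to speed up training within that capacity. With 4838 training images this gives 24 iterations per epoch, and training ran for 50 epochs. All models used the SGDM optimizer and the cross-entropy loss. To check for overfitting, validation was performed after every two training iterations, and the validation accuracy and loss were monitored.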
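The iteration counts and the step-decay schedule implied by these settings can be worked out as follows, together with a scalar sketch of the SGDM update. The momentum value 0.9, the 0-based iteration counter, and the function names are assumptions; the source gives only the initial rate, the decay factor, and the decay period.

```python
N_TRAIN = 4838
BATCH_SIZE = 200
EPOCHS = 50
LR0 = 5e-5          # initial learning rate
DROP_EVERY = 200    # iterations between decays
DROP_FACTOR = 0.5   # the rate is halved at each decay

iters_per_epoch = N_TRAIN // BATCH_SIZE     # 24 full batches per epoch
total_iters = iters_per_epoch * EPOCHS      # 1200 iterations in 50 epochs


def learning_rate(iteration):
    """Piecewise-constant schedule; iteration is counted from 0."""
    return LR0 * DROP_FACTOR ** (iteration // DROP_EVERY)


def sgdm_step(w, grad, velocity, lr, momentum=0.9):
    """One SGD-with-momentum update of a scalar weight.

    momentum = 0.9 is a common default, assumed here.
    """
    velocity = momentum * velocity - lr * grad
    return w + velocity, velocity


final_lr = learning_rate(total_iters - 1)
w, v = sgdm_step(1.0, grad=2.0, velocity=0.0, lr=0.1)
```

Under this schedule the learning rate is halved five times over the 1200 iterations, ending at 5e-5/32.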

-

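The training processes of the six transfer-learning models are shown in Fig. 9. The validation accuracy and loss curves follow the same overall trend as the training curves, indicating that none of the models overfitted during training.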

Figure 9. Transfer learning model training process. (a) Training set accuracy; (b) Training set loss; (c) Validation set accuracy; (d) Validation set loss

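The loss of VGG-16-1 starts at 6.4377. After 64 iterations both the training and validation losses fall below 0.1, after 21 epochs they stay below 0.01, and the validation loss at epoch 50 is 0.0012. Accuracy rises from an initial 24% to above 90% within 16 iterations; after 10 epochs the training and validation accuracies stay above 99%, and at epoch 50 the model accuracy reaches 99.94%.

VGG-16-2 starts with a loss of 2.7856. After 13 epochs the training and validation losses fall below 0.2, and the validation loss at epoch 50 is 0.0383. Compared with VGG-16-1, however, its training fluctuates considerably throughout. The initial accuracy is 14.5%, accuracy stays above 90% after 9 epochs, and the validation accuracy reaches 99.07% at epoch 50; even after 50 epochs the training accuracy and loss remain unstable.

The ResNet50 transfer-learning model starts with a loss of 1.8157. After 50 iterations the training and validation losses stay below 0.5, after 27 epochs they stay below 0.1, and the validation loss at epoch 50 is 0.0581. The initial accuracy is 19.15% and exceeds 90% after 76 iterations; after 24 epochs the training and validation accuracies both stay above 98%, and the validation accuracy reaches 99.13% at epoch 50.

The DenseNet201 transfer-learning model starts with a loss of 2.0211. After 13 epochs the training and validation losses fall below 0.2, after 33 epochs they stay below 0.1, and the validation loss at epoch 50 is 0.076, so the network converges somewhat slowly. The initial accuracy is 11%; after 5 epochs the training and validation accuracies exceed 90%, after 27 epochs they stay above 97%, and the validation accuracy reaches 98.57% at epoch 50.

The MobileNetV2 transfer-learning model starts with a loss of 2.3415. After 8 epochs the training and validation losses stay below 0.2, after 31 epochs they fall below 0.1, and the validation loss at epoch 50 is 0.0846, so the network converges slowly and the training curve fluctuates slightly. Accuracy rises from an initial 17.50% to above 90% after 4 epochs, stays above 95% after 21 epochs, and the final validation accuracy is 97.03%.

The InceptionV3 transfer-learning model starts with a loss of 1.9385. After 12 epochs the training and validation losses both fall below 0.5, after 33 epochs they fall below 0.3, and the validation loss at epoch 50 is 0.2269. Its loss therefore converges more slowly than that of the other models, and the network is unstable with large fluctuations. The initial accuracy is 13.5%, accuracy stays above 90% after 15 epochs, and the validation accuracy reaches 94.61% at epoch 50.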

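In summary, the VGG16 and ResNet50 transfer models classify the defects of the GFRP/NOMEX honeycomb sandwich structure best. VGG-16-1 converges quickly, trains stably, and gives the best classification. VGG-16-2 converges less well and still fluctuates considerably late in training, while the two VGG16 variants take nearly the same time to train. ResNet50 classifies slightly worse than the VGG16 models, but because it has fewer learnable parameters than VGG16 it trains about three times faster (4160 s against about 12500 s). The training time of each network is given in Table 3.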

Table 3. Performance comparison of different classification models of transfer learning

| Deep learning model | Learning rate | Training epochs | Training time/s |
|---|---|---|---|
| VGG-16-1 | 0.00005 | 50 | 12500 |
| VGG-16-2 | 0.00005 | 50 | 12515 |
| ResNet50 | 0.00005 | 50 | 4160 |
| DenseNet201 | 0.00005 | 50 | 15437 |
| MobileNetV2 | 0.00005 | 50 | 3765 |
| InceptionV3 | 0.00005 | 50 | 6689 |

-

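Fig. 10 shows the classification results of the six transfer-learning models on the test set, summarizing how the test-set thermograms of GFRP/NOMEX honeycomb sandwich defects were classified.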

-

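The transfer models are evaluated by accuracy and the $ \varphi $ value. The task has 5 classes; each class in turn is treated as positive and all others as negative. A sample predicted positive that is truly positive is a true positive (True Positive); a sample predicted positive that is truly negative is a false positive (False Positive); true negatives and false negatives are defined in the same way. Precision is the fraction of samples predicted positive that are truly positive, and recall is the fraction of truly positive samples that are predicted correctly; they are given by Eqs. (2) and (3). Accuracy is the overall fraction of correct predictions, given by Eq. (4). The $ \varphi $ value is the harmonic mean of precision and recall, given by Eq. (5).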

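$$ \text{Precision} = \frac{\text{True Positive}}{\text{True Positive} + \text{False Positive}} $$ (2)

$$ \text{Recall} = \frac{\text{True Positive}}{\text{True Positive} + \text{False Negative}} $$ (3)

$$ \text{Accuracy} = \frac{\text{True Positive} + \text{True Negative}}{\text{True Positive} + \text{False Positive} + \text{True Negative} + \text{False Negative}} $$ (4)

$$ \varphi = \frac{2 \times \text{Precision} \times \text{Recall}}{\text{Precision} + \text{Recall}} $$ (5)

Table 4 lists the $ \varphi $ values and accuracy of each model on the 5-class classification of infrared thermograms of GFRP/NOMEX honeycomb sandwich defects. The VGG16 and ResNet50 transfer-learning models perform well in every class, with $ \varphi $ values above 95% and accuracy above 98%, and can classify the defects of the GFRP/NOMEX honeycomb sandwich structure effectively. VGG-16-1 makes errors only between glue plugging and water accumulation and gives the best classification. Besides the classification ability of the network itself, the small number of training samples also contributes to misclassification; acquiring more training data, in particular more images of the confused classes, should improve classification and reduce these errors.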
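Eqs. (2), (3), and (5) can be evaluated per class from a confusion matrix by treating each class in turn as positive. The sketch below uses a made-up 3-class matrix rather than the results of Fig. 10, and it computes accuracy as the overall fraction of correct predictions, which is how the single accuracy column of Table 4 is read here; both are assumptions.

```python
def per_class_metrics(cm):
    """cm[i][j] = number of samples of true class i predicted as class j.

    Returns a list of (precision, recall, phi) per class and the
    overall accuracy (correct predictions / all predictions).
    """
    n = len(cm)
    total = sum(sum(row) for row in cm)
    correct = sum(cm[k][k] for k in range(n))
    results = []
    for k in range(n):
        tp = cm[k][k]
        fp = sum(cm[i][k] for i in range(n)) - tp   # predicted k, truly other
        fn = sum(cm[k]) - tp                        # truly k, predicted other
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        phi = (2 * precision * recall / (precision + recall)
               if precision + recall else 0.0)
        results.append((precision, recall, phi))
    return results, correct / total


cm = [[8, 2, 0],     # hypothetical counts, not taken from the study
      [1, 9, 0],
      [0, 0, 10]]
metrics, accuracy = per_class_metrics(cm)
```

As a plausibility check on the reported figures, a single error among 1612 test images gives 1611/1612 ≈ 99.94%, the accuracy quoted for VGG-16-1.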

Table 4. $ \varphi $ value for each defect type and predictive accuracy of each transfer learning model (columns 2 to 6 give the $ \varphi $ value)

| Transfer learning model | Glue plugging | Water accumulation | Debonding 1 | Debonding 2 | Healthy | Predictive accuracy |
|---|---|---|---|---|---|---|
| VGG-16-1 | 99.80% | 99.90% | 100% | 100% | 100% | 99.94% |
| VGG-16-2 | 100% | 99.79% | 99.88% | 97.72% | 95.53% | 99.10% |
| ResNet50 | 99.20% | 99.29% | 99.40% | 98.51% | 96.99% | 98.95% |
| DenseNet201 | 98.61% | 99.39% | 99.52% | 96.88% | 93.96% | 98.33% |
| MobileNetV2 | 97.64% | 98.48% | 98.30% | 97.83% | 95.45% | 98.01% |
| InceptionV3 | 94.32% | 96.58% | 98.18% | 93.29% | 83.09% | 94.85% |

-

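The fine-tuned transfer-learning models based on VGG16, ResNet50, DenseNet201, MobileNetV2, and InceptionV3 all classify the defects of the GFRP/NOMEX honeycomb sandwich structure well. The models based on VGG16 and ResNet50 outperform the other transfer-learning networks: VGG-16-1, VGG-16-2, and ResNet50 reach accuracies of 99.94%, 99.10%, and 98.95% respectively, and their $ \varphi $ values in all five classes are above 95% (Table 4). Of the two fine-tuned VGG16 models, VGG-16-1 has the higher accuracy and $ \varphi $ values and converges quickly and stably. ResNet50 scores lower overall than VGG-16-1, but it trains quickly and still classifies well. These results show that fine-tuning pretrained classic convolutional neural networks through transfer learning can classify the different defect types of GFRP/NOMEX honeycomb sandwich structures well while lowering training cost and shortening training time, and can avoid the overfitting or underfitting that arises when a classic network is trained directly on too few samples. The work provides a reference for quantitative studies of defects in GFRP/NOMEX honeycomb sandwich structures.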

Infrared thermal imaging detection and defect classification of honeycomb sandwich structure defects

-

Abstract: To classify the common defects of GFRP/NOMEX honeycomb sandwich structures accurately, a defect classification model was built on infrared thermography nondestructive testing using convolutional neural networks and transfer learning, and the classification performance of fine-tuned VGG16, MobileNetV2, ResNet50, InceptionV3, and DenseNet201 models was compared. All of these models accurately identify and classify skin debonding, core debonding, water accumulation, glue plugging, and healthy regions, with accuracy above 94% in every case. The two VGG16-based models and the ResNet50-based model outperform the other transfer-learning networks on this data set, reaching accuracies of 99.94%, 99.10%, and 98.95% respectively, with $ \varphi $ values above 95% in all five classes, and can effectively separate defective from healthy regions.

Objective: To classify the defect types of GFRP/NOMEX honeycomb sandwich structures accurately, an infrared thermal imaging detection system was built to collect thermograms of defective and healthy areas, and a defect classification model was constructed using convolutional neural networks and transfer learning to detect defect categories quantitatively.

Methods: Pulsed infrared thermography tests were carried out on the specimens. The training data set was built from the experimental data, and fine-tuned convolutional neural network models were trained through transfer learning to detect defect categories quantitatively. First, GFRP/NOMEX honeycomb sandwich specimens with delamination, debonding, water accumulation, and glue plugging defects were prefabricated, and a pulsed infrared thermal wave detection system was built. A FLIR A655SC infrared camera recorded the surface temperature field of the specimens under pulse excitation. Second, the defects in the thermograms were cropped to 90 pixel×90 pixel, and the data were augmented by rotation through 90°, 180°, and 270°, horizontal flipping, vertical flipping, and added Gaussian noise. The back-end layers of the pretrained VGG16, MobileNetV2, ResNet50, InceptionV3, and DenseNet201 convolutional neural networks were fine-tuned using transfer learning. Finally, the data set was divided at random into training, validation, and test sets, and the networks were trained. The $ \varphi $ value and accuracy were used as indexes to evaluate the generalization ability and classification performance of the models.

Results and Discussions: The fine-tuned VGG16, MobileNetV2, ResNet50, InceptionV3, and DenseNet201 models based on transfer learning were trained (Fig.9). The VGG-16-1 model converges fastest, is stable, and shows no large fluctuations during training. Confusion matrices describe the classification of the test set by the six networks (Fig.10). All six models can classify the five categories prefabricated in the GFRP/NOMEX honeycomb sandwich structure. The $ \varphi $ values and accuracy are listed in Tab.4. The classification accuracy of the VGG16 and ResNet50 fine-tuned models reaches 99.94%, 99.10%, and 98.95% respectively, and their $ \varphi $ values in all five categories are above 95%. Of the two fine-tuned VGG16 models, VGG-16-1 has the higher accuracy and $ \varphi $ values. VGG-16-1 misjudges only one of the 1612 test-set images, and it converges quickly and stably. Although ResNet50 scores lower overall than VGG-16-1, it trains quickly and still classifies well.

Conclusions: The data set was built from real infrared images collected in the infrared thermography tests and was augmented to compensate for the small sample. Using transfer learning, the network structures of VGG16, MobileNetV2, ResNet50, InceptionV3, and DenseNet201 were fine-tuned, and the stability and convergence speed of their training were compared. The networks were also evaluated on a test set that took no part in training. The results show that fine-tuning pretrained classic convolutional neural network models through transfer learning classifies the different defect types of GFRP/NOMEX honeycomb sandwich structures well and detects defect categories accurately.

-


[1] Guo Xingwang. Comparative study on pulsed thermography and modulated thermography of composite honeycomb panels [J]. Acta Materiae Compositae Sinica, 2012, 29(2): 172-179. (in Chinese)

[2] Fan Weiming. Research on the technology of GFRP/NOMEX honeycomb sandwich structure defects detection using infrared thermal wave testing method [D]. Harbin: Heilongjiang University of Science and Technology, 2022. (in Chinese)

[3] Wei Jiacheng, Liu Junyan, He Lin, et al. Recent progress in infrared thermal imaging nondestructive testing technology [J]. Journal of Harbin University of Science and Technology, 2020, 25(2): 64-72. (in Chinese)

[4] Bu Chiwu, Zhao Bo, Liu Tao, et al. Barker coded thermal wave detection and matched filtering for defects in CFRP/Al honeycomb structure [J]. Infrared and Laser Engineering, 2021, 50(10): 20210050. (in Chinese)

[5] Li Yanhong, Jin Wanping, Yang Danggang, et al. Thermal wave nondestructive testing of honeycomb structure [J]. Infrared and Laser Engineering, 2006, 35(1): 45-48. (in Chinese)

[6] LeCun Y, Bengio Y, Hinton G. Deep learning [J]. Nature, 2015, 521(7553): 436-444. doi: 10.1038/nature14539

[7] Pan S J, Yang Q. A survey on transfer learning [J]. IEEE Transactions on Knowledge and Data Engineering, 2010, 22(10): 1345-1359. doi: 10.1109/TKDE.2009.191

[8] Yosinski J, Clune J, Bengio Y, et al. How transferable are features in deep neural networks? [C]//NIPS, 2014, 2: 3320-3328.

[9] Simonyan K, Zisserman A. Very deep convolutional networks for large-scale image recognition [C]//ICLR, 2015: 1-14.

[10] Xu Jinghui, Shao Mingye, Wang Yichen, et al. Recognition of corn leaf spot and rust based on transfer learning with convolutional neural network [J]. Transactions of the Chinese Society for Agricultural Machinery, 2020, 51(2): 230-236, 253. (in Chinese)

[11] He K, Zhang X, Ren S, et al. Deep residual learning for image recognition [C]//Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2016: 770-778.

[12] Feng Xiao, Li Dandan, Wang Wenjun, et al. Image recognition of wheat leaf diseases based on lightweight convolutional neural network and transfer learning [J]. Journal of Henan Agricultural Sciences, 2021, 50(4): 174-180. (in Chinese)

[13] Sandler M, Howard A, Zhu M, et al. Mobilenetv2: Inverted residuals and linear bottlenecks [C]//Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2018: 4510-4520.

[14] Szegedy C, Vanhoucke V, Ioffe S, et al. Rethinking the inception architecture for computer vision [C]//Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2016: 2818-2826.

[15] Cai Peng, Yang Lei, Luo Junli. Fabric defect detection method based on fusion of convolutional neural network models [J]. Journal of Beijing Institute of Fashion Technology (Natural Science Edition), 2020, 40(1): 55-62. (in Chinese)
