
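Acquiring information on crop planting structure and understanding how that structure changes in space and time provide an important theoretical basis for agricultural development in China and are of far-reaching significance for agricultural production [1-3].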

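With the continuing progress of remote sensing technology, large-area crop classification from remote sensing imagery has become feasible [4]. Conventional classification methods usually cannot exploit the high-dimensional features contained in the imagery, so their classification performance is poor. Deep learning methods can learn deeper image features, extract them effectively and make classification decisions according to the task. In recent years their range of application has broadened further, with target extraction and scene interpretation as representative examples [5], and they offer a new route to better classification of UAV remote sensing images [6]. Given this advantage, more and more researchers have applied deep networks to remote sensing image classification. For the particularly complex shapes of cultivated land in remote sensing images, a representative study is that of Li Changjun et al. [7]: addressing factors such as differing acquisition times and the uneven distribution of soil types, they used a convolutional neural network to extract cultivated-land information, and after training and optimization the model reached an extraction accuracy of 86.7%, compared with only 73.9% for a conventional support vector machine. Gu Lian et al. [8] exploited the rich information in high-resolution remote sensing images and used a U-Net model, with an adjusted network structure and optimized parameters, to extract urban buildings, obtaining an F1 score of 0.943. Chen Jie et al. [9] proposed a farmland extraction technique based on optimal scale selection, mainly to handle the morphological and spectral differences of farmland in hilly areas. Lu Heng et al. [10] used transfer learning to extract small cultivated plots from UAV images, which provide high spatial resolution and diverse texture information; this approach reached a recognition accuracy of 91.9% and produced plots with good continuity and completeness. Using a UAV as the flight platform, Zhu Xiufang et al. [11] exploited the characteristics of plastic-film mulching to extract farmland information from images of their study area, with a best accuracy of 94.84%. However, because different crops look similar during the growing season and the pixels along the boundary between two crops have similar values, conventional classification methods do not extract boundary features accurately.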

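Against this background, this paper studies agricultural remote sensing and applies it to the identification of crop planting structure. A convolutional neural network is used to identify planting structure efficiently: by improving the conventional U-Net, planting-structure information can be obtained for different regions and extracted efficiently and accurately, providing an important technical means for rapid agricultural development and support for China's policies on agriculture, rural areas and farmers.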


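The study area is located in Xingren, Guizhou Province, China, as shown in Fig. 1. The data come from the Baidu Dianshi competition. Xingren has a warm-temperate climate with dry winters; under the combined influence of various factors it has a mild, humid north-subtropical plateau monsoon climate, with no extreme heat in summer, no severe cold in winter, a long frost-free period, and rainfall coinciding with the warm season. The climate and rainfall are therefore well suited to crop growth.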


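The dataset used in this study consists of UAV remote sensing images: 4 annotated images of different sizes, the largest measuring 55128×49447 pixels. The dataset contains several object classes: coix (Job's tears), maize, buildings and other background. The annotation is shown in Fig. 2: green areas are maize, yellow areas are coix, red areas are buildings and black areas are other background.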

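When a deep learning model is trained on too few samples it overfits: the model fits the training data too closely and its prediction accuracy on unseen data drops [12]. Because the available training data are limited, the sample set was enlarged by data augmentation, using flipping, up/down/left/right translation and random cropping.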
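The augmentation step can be sketched as follows. This is a minimal NumPy illustration under stated assumptions, not the authors' code: the function names `augment` and `translate`, the 64-pixel shift range, the 896-pixel crop size and the use of class 0 to fill vacated pixels are all hypothetical choices. What it does show is that every geometric transform must be applied identically to the image and to its label mask so that pixels and labels stay aligned.

```python
import numpy as np

def translate(a, dy, dx):
    """Shift the first two axes of `a` by (dy, dx) pixels, zero-filling the gap."""
    out = np.zeros_like(a)
    h, w = a.shape[:2]
    dy, dx = int(dy), int(dx)
    ys, yd = (slice(0, h - dy), slice(dy, h)) if dy >= 0 else (slice(-dy, h), slice(0, h + dy))
    xs, xd = (slice(0, w - dx), slice(dx, w)) if dx >= 0 else (slice(-dx, w), slice(0, w + dx))
    out[yd, xd] = a[ys, xs]
    return out

def augment(image, label, rng, max_shift=64, crop=896):
    """Return one randomly augmented (image, label) pair.

    image: (H, W, C) array; label: (H, W) class-index mask.
    max_shift and crop are illustrative values (assumptions, not from the paper);
    zero-filled pixels in the label are assumed to mean "background".
    """
    # Random horizontal and vertical flips, applied to both arrays.
    if rng.random() < 0.5:
        image, label = image[:, ::-1], label[:, ::-1]
    if rng.random() < 0.5:
        image, label = image[::-1], label[::-1]
    # Random up/down/left/right translation with the same offset for both.
    dy, dx = rng.integers(-max_shift, max_shift + 1, size=2)
    image, label = translate(image, dy, dx), translate(label, dy, dx)
    # Random crop at the same position in both arrays.
    h, w = label.shape
    y0 = rng.integers(0, h - crop + 1)
    x0 = rng.integers(0, w - crop + 1)
    return image[y0:y0 + crop, x0:x0 + crop], label[y0:y0 + crop, x0:x0 + crop]
```

Calling `augment` repeatedly with one `np.random.default_rng` generator yields a different aligned pair each time, which is how a small labeled set can be expanded.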

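After preprocessing, the data were re-partitioned, with 80% used for training and the remaining 20% for validation. Because the largest image measures 55128×49447 pixels, it cannot be fed to the network directly; to fit within GPU memory and meet the input-size requirements of the later experiments, the original images were cropped into small tiles for training. Cropping was done with a sliding window over the original image. In the first pass the tiles are 8000×8000 and fully transparent regions are skipped; the resulting tiles are then cropped a second time into 1024×1024 tiles, with adjacent tiles overlapping by 128 pixels to guarantee full coverage. This yields 12168 images, of which 9735 (size 1024×1024×8) form the training set and 2433 (size 1024×1024×8) form the validation set, greatly increasing the number of samples and meeting the needs of the experiments.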
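The two-pass sliding-window cropping and the 80/20 split can be sketched as below. This is an illustrative NumPy sketch, not the authors' pipeline: it assumes (hypothetically) that transparency is stored in the last channel as an alpha mask where 0 means "no data", and that the last window in each row or column is snapped to the image edge to guarantee full coverage. The helper names are hypothetical. With 1024-pixel tiles and a 128-pixel overlap the stride is 896 pixels.

```python
import numpy as np

def tile_origins(length, tile, overlap):
    """Start offsets of windows of size `tile` whose neighbours overlap by
    `overlap` pixels; the last window is snapped to the edge for full coverage."""
    if length <= tile:
        return [0]
    stride = tile - overlap
    starts = list(range(0, length - tile + 1, stride))
    if starts[-1] != length - tile:
        starts.append(length - tile)  # extra window so the border is covered
    return starts

def crop_tiles(image, tile, overlap, alpha_channel=-1):
    """Yield (y, x, patch) tiles, skipping fully transparent ones.

    Assumes one channel (the last, by default) is an alpha mask where 0 means
    "no data"; this is an illustrative convention, not the paper's format."""
    h, w = image.shape[:2]
    for y in tile_origins(h, tile, overlap):
        for x in tile_origins(w, tile, overlap):
            patch = image[y:y + tile, x:x + tile]
            if not patch[..., alpha_channel].any():
                continue  # completely transparent: nothing to learn from
            yield y, x, patch

def split_train_val(n, val_fraction=0.2, seed=0):
    """Shuffle n sample indices and split them into training and validation sets."""
    idx = np.random.default_rng(seed).permutation(n)
    n_val = int(n * val_fraction)
    return idx[n_val:], idx[:n_val]
```

For the first pass one might call `crop_tiles(image, 8000, 0)` (the paper does not state an overlap for that pass), then `crop_tiles(tile, 1024, 128)` on each result. For 12168 tiles, `split_train_val` returns 9735 training and 2433 validation indices, matching the counts reported above.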

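Feature fusion in U-Net uses only concatenation (concat). Considering the inherent "4V" characteristics of remote sensing big data, namely velocity (rapid processing), value (high value but low density), variety and volume, this paper integrates the scale-wise feature aggregation module (SFAM) of the M2Det network into U-Net. SFAM aggregates multi-level, multi-scale features into a multi-level feature pyramid, which captures more semantic information and makes the results more accurate [13]. The first stage of SFAM concatenates the features along the channel dimension, so that each scale of the aggregated pyramid contains features from multiple depth levels. Simple concatenation alone, however, is not adaptive enough. The second stage therefore applies a channel attention module so that the features concentrate on the most informative channels. Following the SE (squeeze-and-excitation) block, global average pooling produces channel-wise statistics $\mathbf{z} \in \mathbb{R}^C$. To capture channel dependencies accurately, the aggregation module then applies the excitation step described below.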

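The first stage of SFAM concatenates features of the same scale along the channel dimension; the SE attention mechanism then adaptively recalibrates these features, as shown in Eq. (1):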

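$$ \mathbf{s} = F_{ex}(\mathbf{z}, \mathbf{W}) = \sigma\left(\mathbf{W}_2\,\delta(\mathbf{W}_1 \mathbf{z})\right) $$ (1)

where $\sigma$ is the sigmoid function, $\delta$ is the ReLU function, $\mathbf{W}_1 \in \mathbb{R}^{\frac{C}{r'} \times C}$, $\mathbf{W}_2 \in \mathbb{R}^{C \times \frac{C}{r'}}$, and $r'$ is the reduction ratio. Finally, the input $X$ is reweighted by these activations to give the output, as shown in Eq. (2):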

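$$ \tilde{X}_i^c = F_{scale}\left(X_i^c, s_c\right) = s_c \cdot X_i^c $$ (2)

where $s_c$ is the $c$-th element of $\mathbf{s}$ and $X_i^c$ is the $c$-th channel of the $i$-th scale feature.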
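Eqs. (1) and (2) can be sketched in NumPy for a single pyramid scale. This is a simplified illustration, not the M2Det implementation: the same-scale features from several levels are concatenated along the channel axis (first stage), then squeezed by global average pooling, passed through $\mathbf{W}_1$, ReLU, $\mathbf{W}_2$ and a sigmoid, and used to rescale each channel (second stage). Biases are omitted as in Eq. (1), and the weight matrices are assumed to be given rather than learned.

```python
import numpy as np

def se_reweight(x, w1, w2):
    """Squeeze-and-excitation reweighting following Eqs. (1)-(2).

    x:  feature map of shape (C, H, W)
    w1: (C // r, C) reduction weights; w2: (C, C // r) expansion weights
    """
    z = x.mean(axis=(1, 2))                    # squeeze: global average pooling, z in R^C
    hidden = np.maximum(w1 @ z, 0.0)           # delta = ReLU
    s = 1.0 / (1.0 + np.exp(-(w2 @ hidden)))   # sigma = sigmoid, each s_c in (0, 1)
    return s[:, None, None] * x, s             # F_scale: rescale channel c by s_c

def sfam_scale(features, w1, w2):
    """features: list of (C_l, H, W) maps of the same scale from different levels."""
    x = np.concatenate(features, axis=0)       # stage 1: concatenate along channels
    return se_reweight(x, w1, w2)              # stage 2: channel attention
```

With zero weights every channel receives the neutral factor 0.5; learned weights push informative channels toward 1 and suppress the rest.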

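The conventional U-Net has a symmetric structure [14], with an encoder on the left and a decoder on the right, and performs ten standard 3×3 convolutions during feature extraction. Because remote sensing images have complex backgrounds, abundant information and a wide spectral range, the basic U-Net extracts their features poorly. This study therefore increases the depth of the basic U-Net so that it can extract more complex spectral features and improve detection accuracy. Two additional encoder and decoder layers are added to the original structure, so the left and right halves remain symmetric. The right half consists of a series of upsampling stages, each corresponding to a downsampling stage in the left half; the input to each decoder layer contains both the features from the previous layer and the context information from the corresponding downsampling layer, which restores detail in the feature maps while keeping the corresponding spatial dimensions consistent.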
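The effect of adding two encoder/decoder levels can be illustrated by tracking feature-map sizes. The sketch below assumes, hypothetically, 2×2 stride-2 pooling, "same"-padded 3×3 convolutions and two convolutions per level, which reproduces the ten encoder convolutions quoted above for a standard U-Net with four downsampling steps; the paper does not list these details, and the helper names are illustrative.

```python
def encoder_resolutions(input_size, depth):
    """Spatial size at each encoder level of a U-Net-style network.

    depth is the number of 2x2, stride-2 downsampling steps. 'Same'-padded
    convolutions are assumed, so only pooling changes the size (the original
    U-Net used unpadded convolutions, so its sizes differ slightly)."""
    sizes = [input_size]
    for _ in range(depth):
        if sizes[-1] % 2:
            raise ValueError("input size must be divisible by 2**depth")
        sizes.append(sizes[-1] // 2)
    return sizes

def skip_connections(input_size, depth):
    """Decoder stages from bottom to top as (encoder level, spatial size):
    each stage upsamples to that level and concatenates its encoder features."""
    sizes = encoder_resolutions(input_size, depth)
    return [(level, sizes[level]) for level in reversed(range(depth))]

def encoder_conv_count(depth, convs_per_level=2):
    """Number of 3x3 convolutions over the depth + 1 encoder levels."""
    return convs_per_level * (depth + 1)
```

Under these assumptions a 1024×1024 tile reaches 64×64 at the bottom of a standard U-Net (depth 4) and 16×16 in the deepened version (depth 6), which is where the ASPP module would operate.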


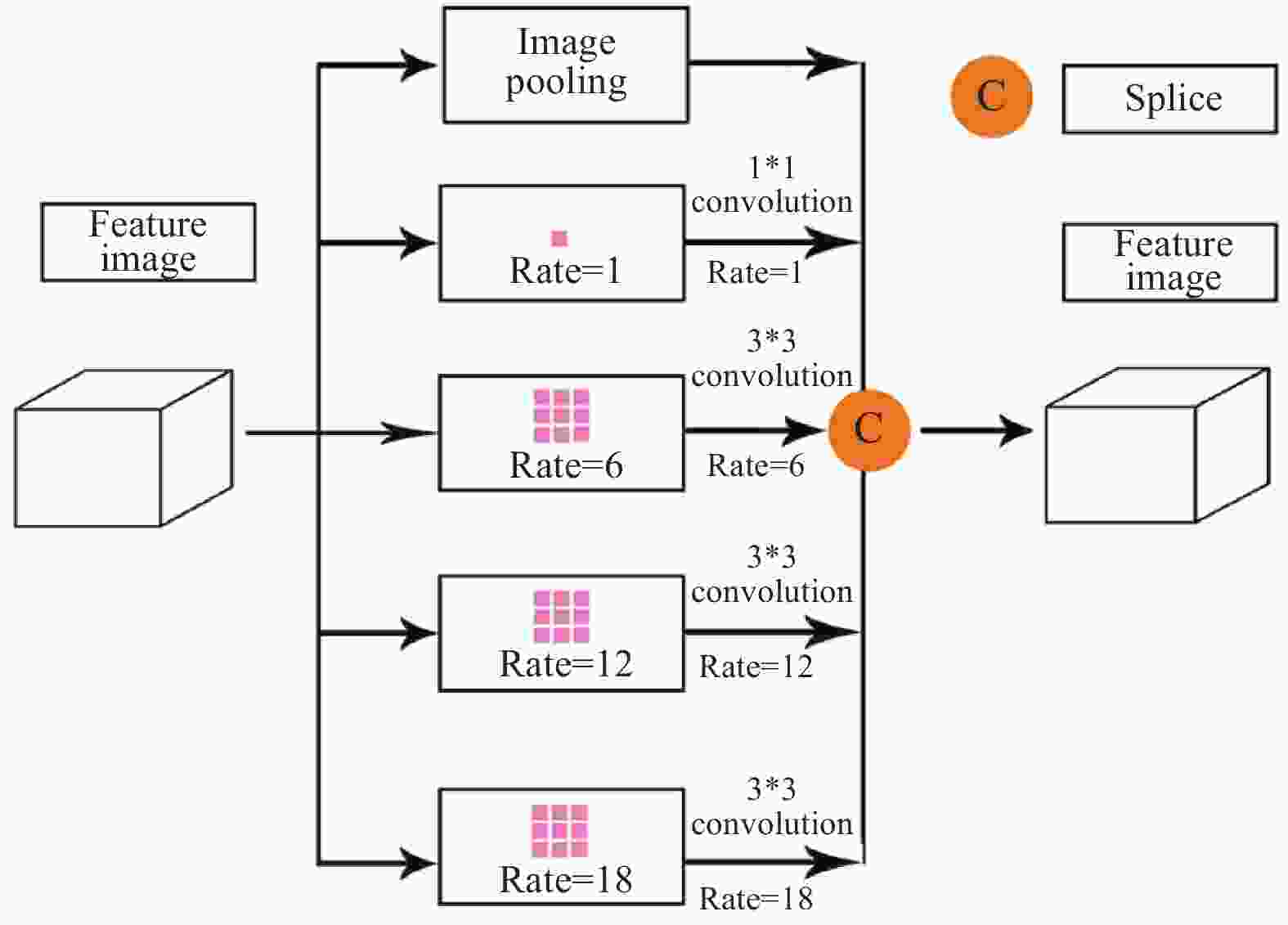

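The ASPP (atrous spatial pyramid pooling) module performs pyramid-style atrous (dilated) pooling in the spatial dimension [15]. It samples a given input in parallel with atrous convolutions at several rates, which is equivalent to capturing image context at multiple scales. A shortcut connection adds the input of the ASPP module to its output; this does not noticeably increase computation, but it improves training efficiency and the final training result, and it helps counter the degradation problem as the network becomes deeper. The ASPP structure is shown in Fig. 3, and its computation is given by Eq. (3):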

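$$ Y = \mathrm{Concat}\left(I_{pooling}(X), H_{1,3}(X), H_{6,3}(X), H_{12,3}(X), H_{18,3}(X)\right) $$ (3)

where $\mathrm{Concat}(\cdot)$ concatenates the feature maps along the channel dimension; $H_{r,n}(X)$ denotes an atrous convolution with an $n \times n$ kernel and sampling (dilation) rate $r$; and $I_{pooling}$ denotes the image-level features of the image pooling branch in Fig. 3, i.e. the average-pooled features of the input feature map.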
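A minimal NumPy sketch of Eq. (3) together with the residual shortcut described above follows. It is an assumption-laden illustration, not the paper's implementation: the image-pooling branch is plain global averaging broadcast back to the input size (DeepLab additionally applies a 1×1 convolution there), and a hypothetical 1×1 projection matrix `proj` maps the concatenated channels back to the input channel count so that the shortcut addition is shape-compatible. Convolutions are written as cross-correlations, as in common deep learning frameworks.

```python
import numpy as np

def atrous_conv2d(x, w, rate):
    """'Same'-padded dilated convolution (cross-correlation).

    x: (C_in, H, W); w: (C_out, C_in, k, k) with odd k; rate: dilation rate r."""
    c_out, _, k, _ = w.shape
    pad = rate * (k // 2)                      # keeps H and W unchanged
    xp = np.pad(x, ((0, 0), (pad, pad), (pad, pad)))
    h, wd = x.shape[1:]
    out = np.zeros((c_out, h, wd))
    for i in range(k):
        for j in range(k):
            # kernel taps are `rate` pixels apart in the input
            patch = xp[:, i * rate:i * rate + h, j * rate:j * rate + wd]
            out += np.einsum('oc,chw->ohw', w[:, :, i, j], patch)
    return out

def aspp(x, branch_weights, rates, proj):
    """branch_weights[n]: (C_b, C_in, k, k) kernel used at rate rates[n];
    proj: (C_in, C_cat) 1x1 projection so the result can be added to x."""
    pooled = x.mean(axis=(1, 2), keepdims=True)              # image pooling branch
    branches = [np.broadcast_to(pooled, x.shape)]            # "upsample" by broadcasting
    branches += [atrous_conv2d(x, k, r) for k, r in zip(branch_weights, rates)]
    y = np.concatenate(branches, axis=0)                     # Eq. (3): channel concat
    return x + np.einsum('oc,chw->ohw', proj, y)             # 1x1 projection + shortcut
```

A 3×3 kernel at rate $r$ spans $2r+1$ pixels, so the rates 6, 12 and 18 give receptive fields of 13, 25 and 37 pixels at no extra parameter cost. With a zero projection the module reduces to the identity, which is the shortcut behaviour described above.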

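The overall architecture of the improved crop classification model is shown in Fig. 4. It retains the basic U-Net structure with three modifications. First, SFAM is added at the concatenation (skip-connection) layers to enrich the semantic information obtained. Second, an ASPP module is added where the deepest encoder layer connects to the decoder, producing contextual semantic information at multiple sampling rates so that pixels are recognized better and the classification result is as good as possible. Third, the network is two levels deeper than the original U-Net, which extends its symmetric structure: more layers yield more semantic information and therefore more complete final features, while care is taken that the deepened network does not suffer from gradient explosion, so that crops in remote sensing images can be classified and recognized in a standardized way. In the forward pass, the input image is first downsampled by convolutions to extract features; SFAM enriches the semantic information; the ASPP module further enriches the downsampled features and guards against gradient explosion in the deeper network; finally, deconvolution in the right-hand half produces the output map.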


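The experimental environment included the ENVI 5.3 remote sensing processing software and MATLAB 2017 for image processing. The deep learning server has an Intel Xeon E5-2678 v3 CPU with a clock frequency of 2.5 GHz, an NVIDIA TITAN Xp GPU with 12 GB of memory, a maximum core clock of 1582 MHz and 3840 CUDA cores, 64 GB of RAM and 4 TB of storage. The model uses the Adam optimizer, which adapts the step size of each parameter during training, with an initial learning rate of 0.01, a batch size of 16 and 100 training epochs, implemented in the TensorFlow deep learning framework. After the model was built, the 9735 training images and 2433 validation images were stored in NumPy arrays for convenience.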
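The adaptive step size mentioned above can be illustrated with a bare-bones Adam update in NumPy. This is the standard Adam rule with the paper's initial learning rate of 0.01; β1 = 0.9, β2 = 0.999 and ε = 1e-8 are the common defaults and are assumptions here, since the paper does not report them. The sketch also computes the number of optimizer steps implied by 9735 training images, a batch size of 16 and 100 epochs.

```python
import math
import numpy as np

class Adam:
    """Minimal Adam optimizer. lr=0.01 follows the paper; beta1, beta2 and eps
    are the usual defaults (assumed, not reported in the paper)."""

    def __init__(self, lr=0.01, beta1=0.9, beta2=0.999, eps=1e-8):
        self.lr, self.b1, self.b2, self.eps = lr, beta1, beta2, eps
        self.m = self.v = None
        self.t = 0

    def step(self, params, grads):
        if self.m is None:
            self.m = np.zeros_like(params)
            self.v = np.zeros_like(params)
        self.t += 1
        self.m = self.b1 * self.m + (1 - self.b1) * grads         # 1st-moment estimate
        self.v = self.b2 * self.v + (1 - self.b2) * grads ** 2    # 2nd-moment estimate
        m_hat = self.m / (1 - self.b1 ** self.t)                  # bias correction
        v_hat = self.v / (1 - self.b2 ** self.t)
        # per-parameter step: dividing by sqrt(v_hat) adapts to the gradient scale
        return params - self.lr * m_hat / (np.sqrt(v_hat) + self.eps)

steps_per_epoch = math.ceil(9735 / 16)    # batches per epoch
total_steps = steps_per_epoch * 100       # over 100 epochs
```

Because each update is divided by the running root-mean-square of the gradient, the very first step has magnitude equal to the learning rate regardless of how large the gradient is. Under these settings training runs 609 batches per epoch, or 60,900 updates in total.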


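To analyze in more depth the strengths and weaknesses of the improved network in extracting crop types, it was compared with the widely used U-Net, SegNet and FCN. All four methods were applied to the same region of the study area, and the precision, completeness and accuracy of their extraction results were tabulated and compared.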


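A sound method for evaluating classification results assesses them more comprehensively and objectively and helps verify the accuracy and effectiveness of a classification method. This paper uses overall accuracy and intersection over union as the evaluation metrics.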

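Because remote sensing images differ in the objects and criteria being classified, overall accuracy (OA), accuracy (A), precision (P) and recall (R) are used for quantitative evaluation [16].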

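Based on the convolutional neural network (CNN) classification results for each image block, the numbers of correctly and incorrectly classified pixels of each land-cover class are counted. OA, a commonly used accuracy measure for remote sensing land-cover classification, is the probability that a pixel is classified correctly: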

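$$ OA = \frac{1}{N_{total}} \sum_{k=1}^{K} N_{kk} $$ (4)

where $N_{kk}$ is the number of correctly classified pixels of class $k$, $K$ is the number of classes and $N_{total}$ is the total number of pixels in the image. Taking the field parcels produced by the image classification as the basic unit, the classification can also be evaluated quantitatively with accuracy, precision and recall: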

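$$ A = \frac{TP + TN}{TP + FN + FP + TN} $$ (5)

$$ P = \frac{TP}{TP + FP} $$ (6)

$$ R = \frac{TP}{TP + FN} $$ (7)

where TP, FP, FN and TN are, respectively, the number of parcels of the class that are correctly identified, the number of parcels wrongly identified as the class, the number of parcels of the class identified as other classes, and the number of parcels of other classes that are correctly identified. The mean intersection over union (MIoU) [17] is the standard metric for semantic segmentation. It measures the ratio of the intersection to the union of two sets, which for semantic segmentation are the ground truth and the predicted segmentation. This ratio can be rewritten as TP (the intersection) divided by the sum of TP, FP and FN (the union). IoU is computed for each class and then averaged: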

$$ MIoU = \frac{1}{k+1} \sum_{i=0}^{k} \frac{p_{ii}}{\sum_{j=0}^{k} p_{ij} + \sum_{j=0}^{k} p_{ji} - p_{ii}} $$ (8)

where $k+1$ is the number of classes (including background) and $p_{ij}$ is the number of pixels of class $i$ predicted as class $j$.
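The metrics in Eqs. (4) to (8) can all be computed from a confusion matrix. The NumPy sketch below is illustrative: it works on pixel labels, although Eqs. (5) to (7) are defined in the text on field parcels (the same counting applies to any unit), and the helper names are hypothetical. It assumes every class appears in the ground truth or the prediction; otherwise that class's IoU is undefined (0/0).

```python
import numpy as np

def confusion_matrix(y_true, y_pred, num_classes):
    """cm[i, j] = number of samples of true class i predicted as class j (p_ij)."""
    idx = num_classes * y_true.ravel() + y_pred.ravel()
    return np.bincount(idx, minlength=num_classes ** 2).reshape(num_classes, num_classes)

def overall_accuracy(cm):
    return np.trace(cm) / cm.sum()                    # Eq. (4)

def one_vs_rest_counts(cm, c):
    """TP, FP, FN, TN for class c treated as the positive class."""
    tp = cm[c, c]
    fp = cm[:, c].sum() - tp                          # other classes predicted as c
    fn = cm[c, :].sum() - tp                          # class c predicted as another class
    tn = cm.sum() - tp - fp - fn
    return tp, fp, fn, tn

def binary_scores(tp, fp, fn, tn):
    accuracy = (tp + tn) / (tp + fn + fp + tn)        # Eq. (5)
    precision = tp / (tp + fp)                        # Eq. (6)
    recall = tp / (tp + fn)                           # Eq. (7)
    return accuracy, precision, recall

def mean_iou(cm):
    inter = np.diag(cm)                               # p_ii
    union = cm.sum(axis=1) + cm.sum(axis=0) - inter   # sum_j p_ij + sum_j p_ji - p_ii
    return np.mean(inter / union)                     # Eq. (8): average over k+1 classes
```

For example, with true labels `[0, 0, 1, 1, 2, 2]` and predictions `[0, 1, 1, 1, 2, 0]`, four of six pixels are correct (OA = 2/3) and the per-class IoUs are 1/3, 2/3 and 1/2, giving MIoU = 0.5.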

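To further examine the applicability of the proposed method to crop classification, it was compared with U-Net, SegNet and FCN. The comparison with U-Net tests whether the proposed improvements are effective, while FCN and SegNet are classic, representative image segmentation networks whose inclusion makes the results more convincing. The characteristics of the models are listed in Table 1. Overall accuracy and MIoU are used as the evaluation metrics, and the overall classification accuracy of each method is compared in Table 2 to verify the stability of the improved U-Net.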

Table 1. Characteristics of different deep learning network models

| Deep learning segmentation model | Model characteristics |
|---|---|
| FCN | The first fully convolutional, end-to-end network: the fully connected layers are removed and upsampling is performed by deconvolution layers |
| SegNet | Pooling results (max-pooling indices) from the encoder are reused in decoding, introducing a large amount of encoder information |
| U-Net | End-to-end standard network in which the decoder concatenates the outputs of each encoder layer, giving better results |
| Improved U-Net | Stronger semantic recognition ability and greater sensitivity in feature extraction |

Table 2. Experimental results of crop recognition with different methods

| Metric | U-Net | SegNet | FCN | Improved U-Net |
|---|---|---|---|---|
| OA | 85.41% | 84.86% | 86.44% | 88.33% |
| MIoU | 0.39 | 0.39 | 0.45 | 0.52 |

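In the deep learning classification experiments, the trained FCN, SegNet, U-Net and improved U-Net models were applied to the test images to obtain crop classification results, which were then evaluated quantitatively with MIoU and overall classification accuracy. The results show that, when all models are trained on the same sample library, the improved U-Net achieves an overall classification accuracy of 88.83% and an MIoU of 0.52, both higher than those of the other models compared, indicating that the improved U-Net can be applied effectively to crop classification. The classification results also show that coix is planted in concentrated areas and has more samples, so all four models classify it with high accuracy; this suggests that the number and distribution of samples per class may also be important factors affecting crop classification accuracy. The improved U-Net classifies on the basis of image semantics and has stronger feature recognition: it uses both the characteristics of coix in the image itself and the surrounding pixels for recognition and classification, and is therefore more accurate and reliable.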

Fig. 5 compares some experimental results of the proposed method with those of the other algorithms.


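UAV remote sensing can cover the field scale of interest in precision agriculture and effectively obtain crop classification information. Satellite remote sensing is developing toward convenient and flexible high-altitude platforms, which promotes the maturity of remote sensing imaging and enriches the temporal, spectral and spatial information in imagery; such imagery is widely used in natural disaster prevention, land resource surveys, marine environmental protection and agricultural monitoring. Remote sensing of a target area does not rely on manual field surveys, which reduces the complexity and difficulty of fieldwork and improves production progress and operational efficiency. How to classify remote sensing images accurately is therefore a topic of wide concern among researchers. This study first reviewed the relevant technologies, notably agricultural information survey techniques and deep learning, and then, combining convolutional neural networks with the characteristics of remote sensing image data, studied the fine extraction of crop information from remote sensing imagery, verifying the effectiveness and accuracy of deep learning. The main work is as follows: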

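(1) UAV remote sensing images of the study area were acquired and labeled to build a sample library of two typical crops, coix and maize, and image augmentation was used to enlarge the experimental data and improve the model's generalization.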

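(2) To address the shortcomings of the conventional U-Net, the ASPP and SFAM modules were introduced to improve the precision of feature extraction, and the network was deepened. SFAM aggregates multi-level, multi-scale features into a multi-level feature pyramid, which makes the model more sensitive to crop pixels and lets it capture the semantic information of remote sensing images more accurately. The improved model was applied to crop classification in remote sensing images to monitor crop distribution.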

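(3) Compared with U-Net, FCN and SegNet, the improved U-Net reached an OA of 88.33% and an MIoU of 0.52, a clear improvement on both metrics. The improved model detects pixels of similar-looking crops in remote sensing images more accurately than the conventional deep learning models, confirming the feasibility and effectiveness of the improvements to U-Net.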

Research on the classification of typical crops in remote sensing images by improved U-Net algorithm


Abstract: To address the incomplete features extracted from remote sensing images by traditional algorithms and their low accuracy in crop classification, UAV remote sensing images are used as the data source and an improved U-Net model is proposed to classify crops such as coix (Job's tears) and maize in the study area. First, the remote sensing images are preprocessed, and the dataset is labeled and augmented. Second, the algorithm is improved by deepening the U-Net structure and introducing the SFAM and ASPP modules, which aggregate multi-level, multi-scale features into a feature pyramid, yielding the improved U-Net. Finally, the model is trained and refined. The experimental results show that the overall classification accuracy (OA) reaches 88.83% and the mean intersection over union (MIoU) reaches 0.52; compared with the traditional U-Net, FCN and SegNet models, both the classification metrics and the accuracy are clearly improved.


Key words:

- deep learning /

- crop classification /

- drone remote sensing /

- improved U-Net model


[1] Liu Yanwen, Liu Chengwu, He Zongyi, et al. Delineation of basic farmland based on local spatial autocorrelation of farmland quality at pixel scale[J]. Transactions of the Chinese Society of Agricultural Machinery, 2019, 50(5): 260-268, 319. (in Chinese)

[2] Zhu Lin. Research on crop classification and planting area extraction based on Sentinel multi-source remote sensing data[D]. Xianyang: Northwest Sci-tech University of Agriculture and Forestry, 2018. (in Chinese)

[3] He Zhangzheng. Remote sensing technology and its application analysis in land and space planning[J]. Real Estate, 2019(11): 44-45. (in Chinese)

[4] Yuan Chen. Research on classification of high spatial resolution remote sensing images[D]. Xi'an: Chang'an University, 2016. (in Chinese)

[5] Guo Jing, Jiang Jie, Cao Shixiang. Level set hierarchical segmentation of buildings in remote sensing images[J]. Infrared and Laser Engineering, 2014, 43(4): 1332-1337. (in Chinese)

[6] Zhang Shiqi. Crop classification and recognition based on deep learning for UAV remote sensing images[D]. Chengdu: Chengdu University of Technology, 2020. (in Chinese)

[7] Li Changjun, Huang He, Li Wei. Research on cultivated land extraction technology of agricultural remote sensing image based on support vector machine[J]. Instrument Technology, 2018(11): 5-8, 48. (in Chinese)

[8] Gu Lian. Research on remote sensing image building detection and change detection based on deep learning[D]. Hangzhou: Zhejiang Gongshang University, 2018. (in Chinese)

[9] Lu Heng, Fu Xiao, He Yinan, et al. Extraction of cultivated land information from UAV images based on migration learning[J]. Transactions of the Chinese Society of Agricultural Machinery, 2015, 46(12): 274-279, 284. (in Chinese) doi: 10.6041/j.issn.1000-1298.2015.12.037

[10] Zhu Xiufang, Li Shibo, Xiao Guofeng. Extraction method of film-coated farmland area and distribution based on UAV remote sensing image[J]. Transactions of the Chinese Society of Agricultural Engineering, 2019, 35(4): 106-113. (in Chinese)

[11] Zhu Ming. Application of convolutional neural network in classification of high-resolution satellite image land cover[D]. Beijing: China University of Geosciences (Beijing), 2020. (in Chinese)

[12] Yang Nan, Nan Lin, Zhang Dingyi, et al. Research on image description based on deep learning[J]. Infrared and Laser Engineering, 2018, 47(2): 0203002. (in Chinese) doi: 10.3788/IRLA201847.0203002

[13] Zhang Jun. Research on dairy cow identification method based on image features and deep learning[D]. Changchun: Jilin Agricultural University, 2020. (in Chinese)

[14] Chen L C, Papandreou G, Kokkinos I, et al. DeepLab: Semantic image segmentation with deep convolutional nets, atrous convolution, and fully connected CRFs[J]. IEEE Transactions on Pattern Analysis and Machine Intelligence, 2018, 40(4): 834-848. doi: 10.1109/TPAMI.2017.2699184

[15] Liu Wenxiang, Shu Yuanzhong, Tang Xiaomin, et al. Semantic segmentation of remote sensing images using dual attention mechanism Deeplabv3+ algorithm[J]. Tropical Geography, 2020, 40(2): 303-313. (in Chinese)

[16] Sun Yu, Han Jingye, Chen Zhibo, et al. Aerial monitoring method of greenhouse and mulching farmland by drone based on deep learning[J]. Transactions of the Chinese Society of Agricultural Machinery, 2018, 49(2): 133-140. (in Chinese)

[17] Qiu Bingwen, Li Weijiao, Tang Zhenghong, et al. Mapping paddy rice areas based on vegetation phenology and surface moisture conditions[J]. Ecological Indicators, 2015, 56: 79-86. doi: 10.1016/j.ecolind.2015.03.039
